User Guide Overview

The QuantaStor User Guide focuses on how to configure your hosts to access storage volumes (iSCSI targets) in your QuantaStor storage system. The first sections cover how to configure your iSCSI initiator to log in and access your volumes. A later section covers how to configure your multipath driver.

Quick iSCSI Primer

- What is iSCSI?

- iSCSI (Internet SCSI) is a block protocol that lets you connect block storage to your servers using the standard TCP/IP protocol over standard Ethernet networks. After your server logs in to an appliance to access an iSCSI device, it sees a new disk device that appears exactly as it would if you had physically added a new HDD to the server.

- What is an iSCSI Initiator?

- The server that connects to the appliance to use the iSCSI storage is the iSCSI initiator. It initiates connections to the storage appliance, which has one or more target devices. Every server has a unique initiator IQN that identifies it.

- What is an iSCSI Target?

- Each Storage Volume you create in QuantaStor is an iSCSI target. Each Storage Volume has a unique name assigned to it, called an IQN (iSCSI Qualified Name). More specifically, it is a target IQN. QuantaStor Storage Volume IQNs always start with iqn.2009-10.com.osnexus: and look like this: iqn.2009-10.com.osnexus:9c30f734-261a10ffeda36cf6:volume1

- How is iSCSI storage access controlled?

- In the QuantaStor web management interface you assign which hosts can access which volumes. (Traditionally this has been called "LUN masking.") In QuantaStor you simply check the boxes for the volumes that should be accessible to each host in the Assign Volumes.. screen.
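The target IQN format described above is easy to recognize from a script. The following is a minimal sketch and not part of QuantaStor: both function names are hypothetical, and treating the text after the last colon as the volume name is an assumption drawn from the example IQN in this section.

```shell
#!/bin/sh
# Hypothetical helpers (not part of QuantaStor) for working with target IQNs.

# Succeeds if the IQN carries the QuantaStor prefix described above.
is_quantastor_target() {
    case "$1" in
        iqn.2009-10.com.osnexus:*) return 0 ;;
        *) return 1 ;;
    esac
}

# Prints the text after the last colon. Assumption: this is the volume
# name, as in the example IQN in this guide.
target_volume_name() {
    printf '%s\n' "${1##*:}"
}

iqn="iqn.2009-10.com.osnexus:9c30f734-261a10ffeda36cf6:volume1"
if is_quantastor_target "$iqn"; then
    echo "QuantaStor volume: $(target_volume_name "$iqn")"
fi
```

An initiator IQN such as iqn.1991-05.com.microsoft:yourhostname does not match the prefix, so the first function is a quick way to tell targets and initiators apart in logs.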

iSCSI Initiator Configuration

Connecting to your QuantaStor (QS) storage system via the Microsoft iSCSI initiator is straightforward. It involves four steps:

- Find the initiator IQN for the host/server you're going to assign storage to. These typically look like a URL but start with 'iqn.'

- Create a Host entry in QuantaStor to represent your server/host with its IQN

- Assign the storage volume to the host in the QuantaStor web management interface

- Use the iSCSI initiator software on the host/server to log in to the QuantaStor appliance and connect to the iSCSI storage volume you assigned to the host in the previous step.

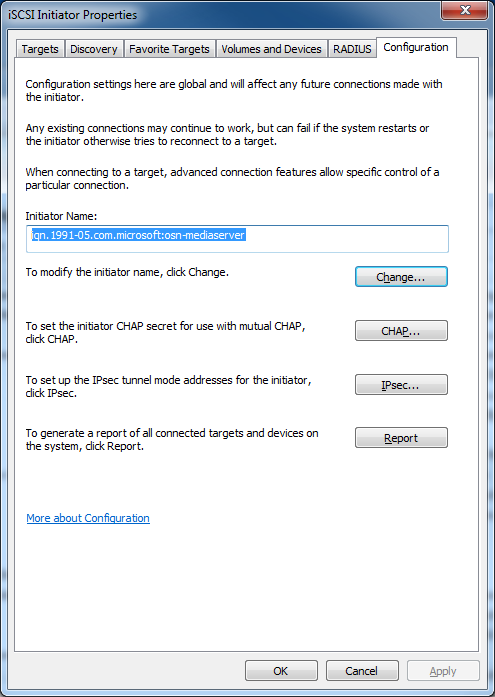

Step 1. Locating the iSCSI Initiator IQN for your Host/Server

Locate Initiator IQN: Microsoft Windows 7/8 & Windows 2008/2012 Server

On Windows hosts you'll find the host initiator in the Configuration tab after loading the iSCSI Initiator utility. You can find the utility by typing 'iSCSI' into the Search Programs and Files section that appears when you click the Windows Start icon. You'll want to select the IQN and type CTRL-C on your keyboard to copy it to the clipboard so you can easily enter the IQN into the QuantaStor Manager dialog for Host Add..

Locate Initiator IQN: Ubuntu/Debian

Most Linux-based systems use the open-iscsi iSCSI initiator software, which stores the IQN for the Linux server in a file located at /etc/iscsi/initiatorname.iscsi. Here's an example of the file's contents. You'll need to enter the IQN part, which is iqn.1993-08.org.debian:01:d7eee36ce in this example, into the Host Add.. screen in QuantaStor.

    root@hat102:/sandbox/osn_quantastor/build# more /etc/iscsi/initiatorname.iscsi
    ## DO NOT EDIT OR REMOVE THIS FILE!
    ## If you remove this file, the iSCSI daemon will not start.
    ## If you change the InitiatorName, existing access control lists
    ## may reject this initiator. The InitiatorName must be unique
    ## for each iSCSI initiator. Do NOT duplicate iSCSI InitiatorNames.
    InitiatorName=iqn.1993-08.org.debian:01:d7eee36ce

If the iSCSI initiator software is not installed, you can install it on your Ubuntu/Debian server via this command.

sudo apt-get install open-iscsi

Once installed it will generate the /etc/iscsi/initiatorname.iscsi file for you. Note that there is some more detail on Ubuntu/Debian configuration steps here that you may find helpful.

Locate Initiator IQN: RedHat / CentOS 6.x

The iSCSI initiator tools you'll need to access iSCSI storage from your QuantaStor appliance are typically pre-installed, even with the minimal server configuration, but you should verify that they are installed by running this command:

yum install iscsi-initiator-utils

When an iSCSI initiator logs into a storage appliance like QuantaStor it uses a special identifier called an IQN which stands for iSCSI Qualified Name. This IQN for your server can be found in a file located at /etc/iscsi/initiatorname.iscsi. Simply 'more' the file to see what your IQN is and then use this to create a Host entry in the QuantaStor web management interface to represent this host. In this example the IQN is iqn.1994-05.com.redhat:5a66857c49dc:

    [root@centostest ~]# more /etc/iscsi/initiatorname.iscsi
    InitiatorName=iqn.1994-05.com.redhat:5a66857c49dc
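If you'd rather not copy the IQN by eye, the value can be pulled out of the file directly. This is a small sketch that assumes the open-iscsi file layout shown in the examples above; the function name is hypothetical.

```shell
#!/bin/sh
# Prints the initiator IQN stored in an open-iscsi initiator name file.
# Comment lines (starting with '#') are skipped because they do not
# begin with "InitiatorName=".
# $1 = path to the file (defaults to the standard location)
read_initiator_iqn() {
    sed -n 's/^InitiatorName=//p' "${1:-/etc/iscsi/initiatorname.iscsi}"
}

# Example: print this host's IQN if the file is present.
if [ -r /etc/iscsi/initiatorname.iscsi ]; then
    read_initiator_iqn
fi
```

The printed value is what goes into the IQN field when you add the Host entry in the next step.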

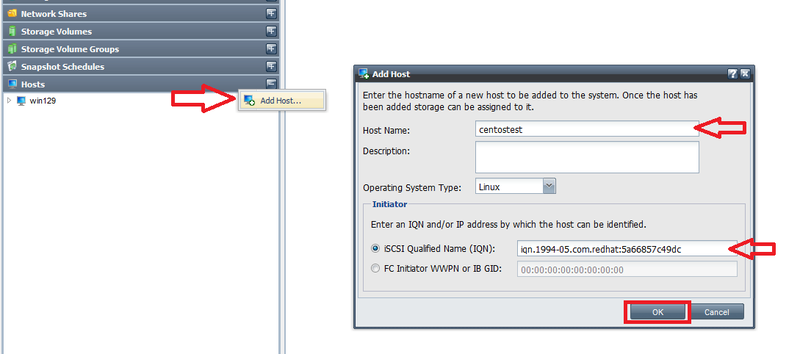

Step 2. Adding a Host entry for your server/host

Now that you know the initiator IQN for your server, you must add a Host entry into QuantaStor to represent your host. QuantaStor needs this information because it restricts access to iSCSI storage volumes to only those hosts/servers you have specifically assigned the storage to.

- Note: Restricting access is critically important, not just for security purposes but also to prevent the same iSCSI device from being accessed by multiple servers at the same time, which can corrupt the filesystem. As a thought experiment, imagine that you could connect a hard drive to two servers at the same time. Now imagine you're installing an operating system onto that shared disk device from both servers at once. The two servers are not coordinating access with each other, so the filesystem created by the first server will be overwritten and/or formatted by the second. This leads to data corruption and data loss almost instantly. Similarly, you must not share iSCSI devices with more than one server unless the servers are communicating with each other and are using a "cluster-aware" filesystem on the iSCSI device. Good examples of cluster-aware systems where you can safely assign iSCSI devices to multiple servers include VMware's VMFS, XenServer iSCSI/HBA SRs, CXFS, OCFS2, Apple Xsan, Quantum StorNext, and others.

To do this, log in to QuantaStor Manager, select the 'Hosts' section, and then choose 'Add Host' from the toolbar, or right-click in the Hosts section and choose 'Add Host' from the pop-up menu. Next, enter the host name and the initiator IQN for your host. The initiator IQN (iSCSI Qualified Name) uniquely identifies your host to the storage system. By default this name is generated for you, so on a Windows system you'll need to run the iSCSI Initiator application to get the IQN. The initiator name will look something like 'iqn.1991-05.com.microsoft:yourhostname' on Windows machines; it varies widely by OS type but generally starts with 'iqn.'

- Note: The first part of the initiator name identifies the initiator vendor, in this case "iqn.1991-05.com.microsoft"; the second part is typically your host name or the fully qualified domain name (FQDN) for the host (ex: yourhostname.yourdomainname.com). All IQNs start with 'iqn.' and IQNs have various restrictions on which characters you can use. If you modify the IQN, try to keep your changes to the host name portion and use only alphanumeric characters.

- Note: You must enter the IQN into the 'Add Host' dialog exactly as it is written in 'iSCSI Initiator Properties'. If you make a typo, the host will not be able to log in to the storage system.
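Since a mistyped IQN only shows up later as a failed login, it can help to sanity-check the string before entering it. The sketch below is a hypothetical pre-check, not a QuantaStor feature. The pattern is a loose approximation of the iqn.yyyy-mm.reversed.domain[:identifier] layout and will not catch every invalid name.

```shell
#!/bin/sh
# Hypothetical pre-check for an initiator IQN before typing it into the
# 'Add Host' dialog. The pattern is a loose approximation of the
# iqn.yyyy-mm.reversed.domain[:identifier] layout, not a full validator.
looks_like_iqn() {
    printf '%s\n' "$1" |
        grep -Eq '^iqn\.[0-9]{4}-[0-9]{2}\.[a-z0-9][a-z0-9.-]*(:[^[:space:]]+)?$'
}

for candidate in \
    "iqn.1991-05.com.microsoft:yourhostname" \
    "iqn.1991-05.com.microsoft: yourhostname" \
    "ign.1991-05.com.microsoft:yourhostname"
do
    if looks_like_iqn "$candidate"; then
        echo "ok:      $candidate"
    else
        echo "suspect: $candidate"
    fi
done
```

The second and third candidates illustrate two common slips: a stray space and a misspelled 'iqn.' prefix. A name that passes can still differ from the host's real IQN, so copying and pasting remains the safer route.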

Step 3. Assigning iSCSI Storage Volume(s) to your Host/Server

Now that you have a Host entry in QuantaStor for your server, you can assign storage to it. Right-click on the newly added Host and choose 'Assign Volumes..' to assign your Storage Volumes to this Host.

After you have assigned one or more storage volumes to the host, return to your server to do the final iSCSI login step.

- Note: You should not assign any given storage volume to more than one host unless you are using it with a cluster aware filesystem like OCFS2, CXFS, VMware VMFS, or XenServer iSCSI Software / LVHD SR. If your systems do not have a cluster filesystem and you need shared storage then a file based protocol like NFS and/or CIFS is best. For NAS storage you'll create a Network Share in QuantaStor rather than a storage volume.

Step 4. iSCSI Initiator Login to access your QuantaStor Storage Volume

Initiator Login: Linux / RedHat

Now that you've added a host entry with the IQN for your RedHat/CentOS server and assigned volumes to that host, you're almost ready to do the iSCSI login to the QuantaStor system. Here you can see that my first attempt came back and reported no portals.

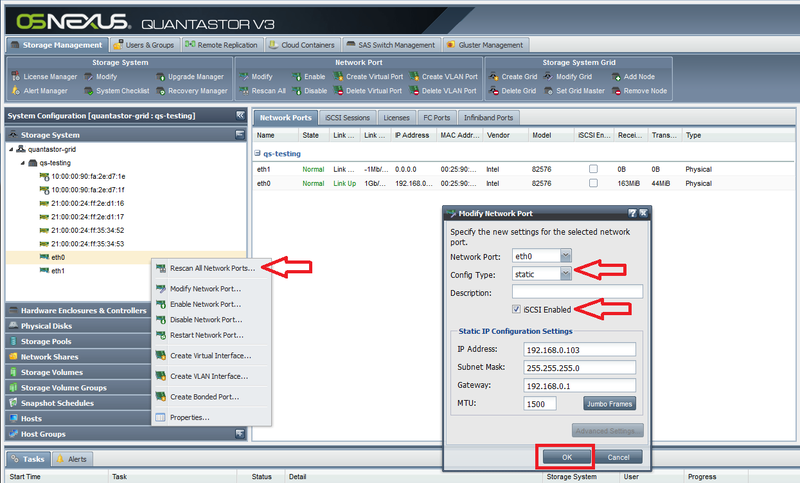

    [root@centostest ~]# iscsiadm -m discovery -t sendtargets -p 192.168.0.103
    Starting iscsid: [ OK ]
    iscsiadm: No portals found

This is because I forgot to set the 'iSCSI Enabled' flag on the network interface for 192.168.0.103 in the QuantaStor appliance. See the section below on how to enable iSCSI access on your network ports. With iSCSI enabled on the port, the discovery succeeds and shows the available iSCSI targets:

    [root@centostest ~]# iscsiadm -m discovery -t sendtargets -p 192.168.0.103
    192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-866fb7bf39a7eb35:vol001
    192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-43d213d5be8bd56f:vol002
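If you only want one of the discovered volumes, you can pick its target IQN out of the discovery output and log in to that target alone. The sketch below assumes the two-column discovery output format shown above; the function name is hypothetical, and the iscsiadm commands appear only as comments so the snippet is safe to run anywhere.

```shell
#!/bin/sh
# Reads 'iscsiadm -m discovery' output on stdin and prints one target IQN
# per line (the second whitespace-separated field of each record).
list_discovered_targets() {
    awk '$2 ~ /^iqn\./ { print $2 }'
}

# Usage on a host with open-iscsi installed (not run here):
#   iscsiadm -m discovery -t sendtargets -p 192.168.0.103 | list_discovered_targets
#   iscsiadm -m node -T <target-iqn> -p 192.168.0.103:3260 --login

# Demonstration using the discovery output shown above.
list_discovered_targets <<'EOF'
192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-866fb7bf39a7eb35:vol001
192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-43d213d5be8bd56f:vol002
EOF
```

Status lines such as 'Starting iscsid: [ OK ]' are ignored because their second field does not start with 'iqn.'.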

The quick way to log in to all the available targets is to add the --login option to the end of the line like so:

    [root@centostest ~]# iscsiadm -m discovery -t sendtargets -p 192.168.0.103 --login
    192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-866fb7bf39a7eb35:vol001
    192.168.0.103:3260,1 iqn.2009-10.com.osnexus:264583c5-43d213d5be8bd56f:vol002
    Logging in to [iface: default, target: iqn.2009-10.com.osnexus:264583c5-866fb7bf39a7eb35:vol001, portal: 192.168.0.103,3260] (multiple)
    Logging in to [iface: default, target: iqn.2009-10.com.osnexus:264583c5-43d213d5be8bd56f:vol002, portal: 192.168.0.103,3260] (multiple)
    Login to [iface: default, target: iqn.2009-10.com.osnexus:264583c5-866fb7bf39a7eb35:vol001, portal: 192.168.0.103,3260] successful.
    Login to [iface: default, target: iqn.2009-10.com.osnexus:264583c5-43d213d5be8bd56f:vol002, portal: 192.168.0.103,3260] successful.

My two 40GB storage volumes now appear to the CentOS server as SCSI block devices sdb and sdc:

    [root@centostest ~]# more /proc/partitions
    major minor  #blocks  name
       8     0   18874368 sda
       8     1     512000 sda1
       8     2   18361344 sda2
     253     0   16293888 dm-0
     253     1    2064384 dm-1
       8    16   41943040 sdb
       8    32   41943040 sdc

This also presents another common challenge, which is determining which volume is which. The solution is to install a tool called sg_inq, which is provided by the sg3_utils package, like so:

yum install sg3_utils

Once installed you can now check to see some internal information about your devices including the internal ID.

[root@centostest ~]# sg_inq --page=0x83 /dev/sdc | grep specific

vendor specific: 866fb7bf39a7eb35bf97d239

The ID for this storage volume can be found in the QuantaStor web interface in the Properties page for the Storage Volume. Just look for the field marked 'Internal ID' and this ID will correlate with the device so you can tell which is which.
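With more than a couple of volumes, it's convenient to print the ID for every device in one pass. This is a sketch only: the function names are hypothetical, and it assumes the 'vendor specific:' line format shown in the sg_inq output above.

```shell
#!/bin/sh
# Extracts the value of the 'vendor specific:' line from the output of
# 'sg_inq --page=0x83 <device>' supplied on stdin.
extract_internal_id() {
    sed -n 's/^[[:space:]]*vendor specific:[[:space:]]*//p'
}

# Prints "<device> <id>" for each device given as an argument.
# Requires sg_inq (sg3_utils) and usually root privileges.
print_internal_ids() {
    for dev in "$@"; do
        id=$(sg_inq --page=0x83 "$dev" 2>/dev/null | extract_internal_id)
        printf '%s %s\n' "$dev" "${id:-unknown}"
    done
}

# Usage (not run here): print_internal_ids /dev/sdb /dev/sdc
```

Each printed ID can then be compared with the 'Internal ID' field in the Storage Volume's Properties page.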

With the devices identified and available, you can now partition and format them with the filesystem of your choice, just as you would a local direct-attached disk device.

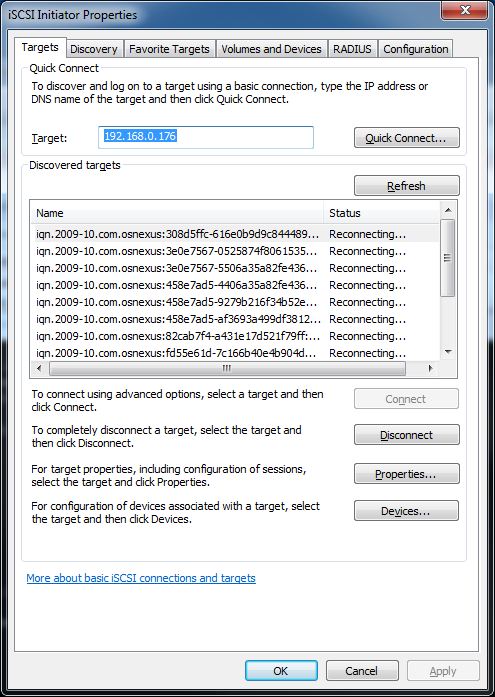

Initiator Login: Windows Server

Return to your Windows system and bring up the 'iSCSI Initiator' application again. On the first page under the 'Targets' tab, you can enter the IP address of your QuantaStor storage system in the box marked 'Target:' then press 'Quick Connect...'. After a moment you will see your storage volumes appear in the 'Discovered targets' section. When they appear, simply select the storage volume you want to connect to, then press the 'Connect' button. Within a few seconds you'll be connected to the storage volume (iSCSI target). Unless this is a storage volume you've previously formatted and utilized, you'll need to use the Windows Disk Management screen to quick format the disk before you begin using it.

Initiator Login: Apple Mac OS X

We recommend using the ATTO Xtend SAN iSCSI initiator available here. We do not recommend using the globalSAN initiator with QuantaStor appliances as we have seen multiple problems with it in testing.

Once you have the IQN for your OS X system, you'll need to add a host entry to QuantaStor using the QuantaStor Manager web UI. Just press the 'Add Host' button, enter the name of your system, and enter the IQN in the box that says 'IQN'. Now that you have a host added, you can assign volumes to your Mac. After you've assigned some storage, go back to the iSCSI initiator dialog in OS X and enter the IP address of your QuantaStor system; you should immediately see all the storage volumes that you have assigned to the host. Note that you'll need to partition the disk after you connect to the iSCSI device / volume. We recommend that you use 'quick format' because a full format will end up reserving blocks in the storage system, which defeats the purpose of thin-provisioning, the default when you 'Create Storage Volume'.

Enable iSCSI Access on Network Ports

By default iSCSI access is enabled on all network ports in your QuantaStor appliance. This is typically OK if all the ports are the same speed and on the same network but in most cases you'll want to disable iSCSI access to 1GbE management ports so that all data flows through the faster 10GbE or 40GbE network ports if you have them. Even with 1GbE ports it is common to separate the management network from the SAN ports used to deliver iSCSI storage to the servers and this option allows you to control that. To enable iSCSI on your network interface in your appliance you'll need to configure it with the 'Enable iSCSI' option selected as shown in this screenshot.

Multipath IO Configuration (iSCSI & FC)

QuantaStor supports round-robin style active/active multipathing using Microsoft's MPIO on Windows and the device-mapper multipath (dmmp) driver on Linux. Active/active multipath support not only boosts performance, it also gives you the benefit of automatic path failover when a network connection is lost to one of your NICs on the host (initiator side) or the storage system (target side).

iSCSI multipathing should be configured using IPs on different network subnets/VLANs to ensure proper communication across the paths. Using multiple IPs on the same subnet will not provide a proper multipathing configuration. By default QuantaStor serves iSCSI on any iSCSI-enabled interface, so no additional configuration is required inside QuantaStor to enable paths for iSCSI multipathing other than configuring each network interface with an appropriate IP address and subnet.

For example, the following networking configuration provides two network paths between the QuantaStor appliance and the host connecting to it.

QuantaStor server IP configuration

- 10.0.1.121/24

- 10.0.2.121/24

Host client IP configuration

- 10.0.1.125/24

- 10.0.2.125/24
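The one-subnet-per-path rule can be checked with a few lines of shell before you start configuring the multipath driver. This sketch only handles /24 networks like the ones in the example above, and the function names are hypothetical.

```shell
#!/bin/sh
# Prints the first three octets of an IPv4 address. This equals the
# network prefix only for /24 networks, as in the example above.
prefix24() {
    printf '%s\n' "${1%/*}" | cut -d. -f1-3
}

# Succeeds when two addresses fall in the same /24 network.
same_subnet24() {
    [ "$(prefix24 "$1")" = "$(prefix24 "$2")" ]
}

# Each host address should share a subnet with exactly one appliance address.
for pair in "10.0.1.125/24 10.0.1.121/24" "10.0.2.125/24 10.0.2.121/24" \
            "10.0.1.125/24 10.0.2.121/24"; do
    set -- $pair
    if same_subnet24 "$1" "$2"; then
        echo "$1 and $2: same subnet"
    else
        echo "$1 and $2: different subnets"
    fi
done
```

In the example configuration, each host address pairs with one appliance address on its own subnet, which gives the two independent paths.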

Once the network configuration is correct, the appropriate multipathing configuration must be performed on the host client side. The rest of this section details how to configure multipathing for the most popular client operating systems.

For Microsoft MPIO configuration you'll need to add the QuantaStor vendor / model information so that Microsoft's default DSM (device specific module) can detect QuantaStor volumes and automatically enable multipathing for them thereby combining multiple paths to your QuantaStor Storage System in a single device as seen in the Windows Disk Manager.

For Linux, you'll need to first install dmmp for your Linux distribution, and then modify the /etc/multipath.conf file to include an additional stanza so that the system properly identifies the QuantaStor devices and uses the correct multi-path device identification and path management rules.

Note: QuantaStor supports SCSI-3 Persistent Reservations so you can use it with Microsoft's Failover Clustering / Hyper-V and other cluster solutions that require SCSI-2 or SCSI-3 reservations.

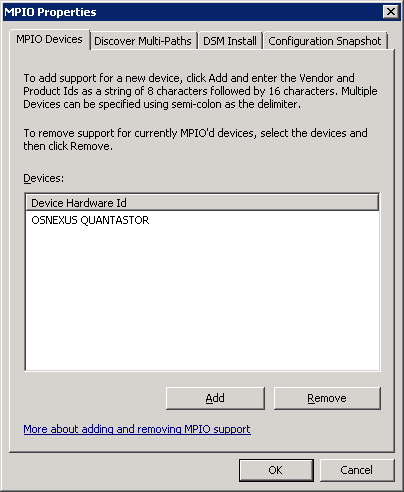

Configuring Microsoft MPIO

The first step in configuring MPIO on Windows Server is to navigate to MPIO properties. (Note that desktop versions of Windows such as XP, Vista, and Windows 7/8 do not support MPIO; you must be using Windows Server 2003/2008/2012.) The easiest way to get to the MPIO configuration screen is to type MPIO in the Start menu search bar. It can also be found in the Control Panel under "MPIO".

Once you're at the MPIO dialog, select the "MPIO Devices" tab and then click the "Add" button. The string you will need to add for QuantaStor is exactly:

    OSNEXUS QUANTASTOR      
           ^          ^^^^^^

Note that the '^' characters are provided here so that you can see where the spaces are. Note also that these are all capital letters, with one space between the words, and exactly six trailing spaces after QUANTASTOR. If you don't have exactly (6) trailing spaces then the MPIO driver won't recognize the QuantaStor devices and you'll end up with multiple instances of the same disk in Windows Disk Manager.
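Because trailing spaces are easy to lose when copying text, it can be safer to generate the string than to type it. The spacing works out to a 24-character string: the vendor padded to 8 characters followed by the product padded to 16, which matches the widths of the vendor and product fields in a standard SCSI INQUIRY response. The sketch below uses a hypothetical function name and simply pads the two words accordingly.

```shell
#!/bin/sh
# Builds the MPIO device string by padding the vendor to 8 characters and
# the product to 16 characters with trailing spaces.
mpio_device_string() {
    printf '%-8s%-16s' "$1" "$2"
}

id=$(mpio_device_string OSNEXUS QUANTASTOR)
# Brackets make the trailing spaces visible.
printf '[%s] length=%s\n' "$id" "${#id}"
```

You can paste the text between the brackets into the "Add" dialog, taking care that your terminal or editor does not trim the trailing spaces.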

Configuring Linux Device-Mapper Multipath (DMMP)

After you have device mapper installed on your Linux distribution, you'll need to log in to your system as root and then add this section to your /etc/multipath.conf file for CentOS:

device {

vendor "OSNEXUS"

product "QUANTASTOR"

getuid_callout "/sbin/scsi_id -g -u -s /block/%n"

path_grouping_policy multibus

failback immediate

path_selector "round-robin 0"

rr_weight uniform

rr_min_io 100

path_checker tur

}

On Ubuntu/Debian the 'getuid_callout' part is a little different so use this version for Ubuntu:

device {

vendor "OSNEXUS"

product "QUANTASTOR"

getuid_callout "/lib/udev/scsi_id --whitelisted --replace-whitespace --device=/dev/%n"

path_grouping_policy multibus

failback immediate

path_selector "round-robin 0"

rr_weight uniform

rr_min_io 100

path_checker tur

}
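In multipath.conf, device stanzas like the two above belong inside a devices { } section. If your file does not have one yet, the sketch below prints a complete section that you can review and merge by hand. The function name is hypothetical, and the settings are the ones from this guide.

```shell
#!/bin/sh
# Prints a devices section wrapping the QuantaStor device stanza.
# $1 is the getuid_callout command line for the distribution in use.
quantastor_devices_section() {
    cat <<EOF
devices {
    device {
        vendor "OSNEXUS"
        product "QUANTASTOR"
        getuid_callout "$1"
        path_grouping_policy multibus
        failback immediate
        path_selector "round-robin 0"
        rr_weight uniform
        rr_min_io 100
        path_checker tur
    }
}
EOF
}

# CentOS variant from this guide; review the output before merging it
# into /etc/multipath.conf by hand.
quantastor_devices_section "/sbin/scsi_id -g -u -s /block/%n"
```

For Ubuntu/Debian, pass the /lib/udev/scsi_id command line shown above as the argument instead.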

Please note: Starting with the 0.5 release of the Linux multipath tools, an additional property definition, "(ID_SCSI_VPD|ID_WWN|ID_SERIAL)", needs to be added to the blacklist_exceptions portion of the multipath.conf file.

blacklist_exceptions {

property "(ID_SCSI_VPD|ID_WWN|ID_SERIAL)"

device {

vendor "OSNEXUS"

product "QUANTASTOR"

}

}

Once the file is updated you'll want to restart the multipath service like so:

service multipathd restart

Once you have it all configured and you're logging into the iSCSI target / storage volume with your iSCSI initiator you'll want to run 'multipath -l' to list the paths and you should see something like this:

    14f534e455855530038323061623737393365306365643738dm-2 OSNEXUS,QUANTASTOR
    [size=512M][features=0][hwhandler=0]
    \_ round-robin 0 [prio=0][active]
     \_ 120:0:0:0 sdg 8:96 [active][undef]
     \_ 119:0:0:0 sdf 8:80 [active][undef]
    14f534e455855530038323061623737393735393231656332dm-1 OSNEXUS,QUANTASTOR
    [size=4.0G][features=0][hwhandler=0]
    \_ round-robin 0 [prio=0][active]
     \_ 118:0:0:0 sde 8:64 [active][undef]
     \_ 117:0:0:0 sdc 8:32 [active][undef]

Remember, you'll need to make sure that you mount your filesystems using the correct unique device ID under /dev/mapper/ so that the multipath driver is utilized. If you mount your filesystem from /dev/sdXX you'll have completely bypassed the multipath driver and will not get the performance and failover capabilities.
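A quick way to confirm that every volume really has more than one path is to count the path lines under each map in the 'multipath -l' output. This sketch uses a hypothetical function name and assumes that each path line contains a host:channel:target:lun tuple, as in the listing above.

```shell
#!/bin/sh
# Reads 'multipath -l' output on stdin and prints "<map> <path count>"
# for each OSNEXUS,QUANTASTOR map. A path line is recognized by its
# host:channel:target:lun tuple.
count_paths_per_map() {
    awk '
        /OSNEXUS,QUANTASTOR/ {
            if (map != "") print map, paths
            map = $1; paths = 0
            next
        }
        /[0-9]+:[0-9]+:[0-9]+:[0-9]+/ { if (map != "") paths++ }
        END { if (map != "") print map, paths }
    '
}

# Usage (not run here): multipath -l | count_paths_per_map
```

A count of 1 for any map suggests that one of the iSCSI sessions is missing or that both sessions ended up on the same network path.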

NFS Configuration

This section outlines how to set up your servers/hosts to access QuantaStor Network Shares via the NFS protocol.

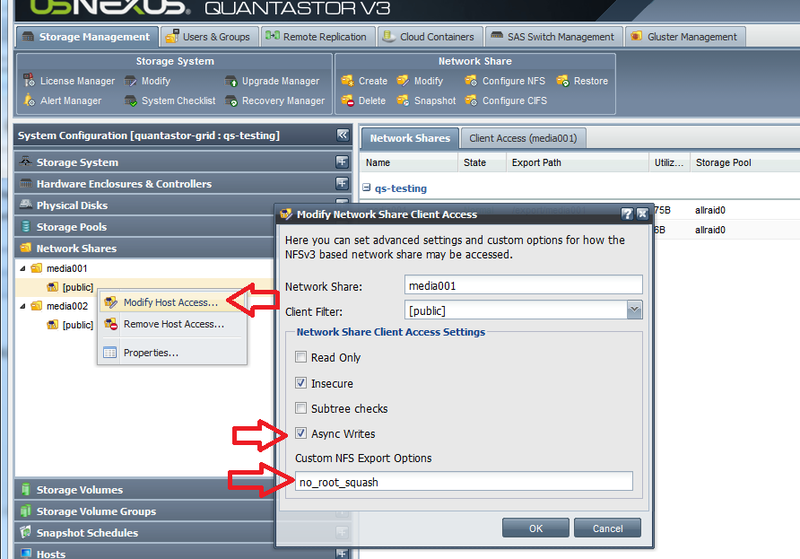

Access Control / Permissions Configuration

Unlike CIFS, access control via NFS is managed by granting access to a specific set of IP addresses. By default, new Network Shares provide public access from all IP addresses, which is shown in the QuantaStor web UI as a [public] network share client entry. The default access control configuration also "squashes" rights from remote "root" user accounts to the "nobody" user ID as a security precaution. This may cause problems with some applications, and in those cases you can apply the 'no_root_squash' custom option to your network share. If you've switched the share to NFSv4, the 'no_root_squash' option may also be required unless you've manually configured the system to use Kerberos user authentication.

no_root_squash Configuration

To configure the 'no_root_squash' option, first go to the QuantaStor web management interface and expand the tree where you see Network Shares. Under your share ('media001' in this example) you'll see a host access entry called [public]. Right-click on that and choose 'Modify Host Access..'. In this screen, enter no_root_squash into the Custom NFS Export Options section. This will allow you to modify files in the share. You'll probably also want to enable 'Async Writes', which will boost performance a bit. Once you're done, click 'OK'.

NFSv4 Mount Procedure

Continuing the above example, if you've switched from NFSv3 mode to NFSv4, you'll need to unmount the share (if you already have it mounted) and then re-mount it:

umount /media001

Now to mount the share with nfs4 mode, mount it like so:

mount -t nfs4 192.168.0.103:/media001 /media001

Note that we've added the -t nfs4 option and excluded the /export prefix from the mount path. That's important: do not try mounting an nfs4 network share with the /export prefix. It may work for a while, but you will inevitably have problems with it.

Resolving Stale File Handle issue on older RedHat / CentOS 5.x systems

We've seen some problems with the NFS client that comes with CentOS 5.5 which lead to the NFS connection dropping, after which you'll see 'Stale File Handle' errors when you try to mount the share. The quick fix is to log in to your QuantaStor system and run this command:

sudo /opt/osnexus/quantastor/bin/nfskeepalive --cron

This generates a small amount of periodic activity on the active shares so that the connections do not get dropped and you don't get stale file handles. CentOS 5.5 has the 2.6.18 kernel which is several years old now and we don't see this problem with the newer kernels used in CentOS 6.x.

RedHat / CentOS 6.x NFS Configuration

To set up your system to communicate with QuantaStor over NFS, you'll first need to log in as root and install a couple of packages like so:

yum install nfs-utils nfs-utils-lib

Once NFS is installed you can now mount the Network Shares that you've created in your QuantaStor system. To do this first create a directory to mount the share to. In this example our share name is 'media001'.

mkdir /media001

Now we can mount the share to that directory by providing the IP address of the QuantaStor system (192.168.0.103 in this example) and the name of the share with the /export prefix like so:

mount 192.168.0.103:/export/media001 /media001
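The only difference between the two mount forms in this section is the /export prefix, which is easy to get wrong when scripting mounts. Here is a small sketch that builds the right source string for each NFS version. The function name is hypothetical, and the IP address and share name are the ones used in this guide's examples.

```shell
#!/bin/sh
# Prints the source argument for mount. NFSv3 shares use the /export
# prefix; NFSv4 shares omit it.
# $1 = appliance IP, $2 = share name, $3 = NFS version (3 or 4)
nfs_mount_source() {
    case "$3" in
        4) printf '%s:/%s\n' "$1" "$2" ;;
        3) printf '%s:/export/%s\n' "$1" "$2" ;;
        *) echo "unsupported NFS version: $3" >&2; return 1 ;;
    esac
}

nfs_mount_source 192.168.0.103 media001 3
nfs_mount_source 192.168.0.103 media001 4

# Usage as root (not run here):
#   mount "$(nfs_mount_source 192.168.0.103 media001 3)" /media001
#   mount -t nfs4 "$(nfs_mount_source 192.168.0.103 media001 4)" /media001
```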

Ubuntu / Debian NFS Configuration

The process for configuring NFS with Ubuntu and Debian is the same as above only the package installation is different. To install the NFS client packages issue these commands at the console to get started then follow the same instructions given above for mounting shares for CentOS:

    sudo -i
    apt-get update
    apt-get install nfs-common

VMware Configuration

Creating VMware Datastore

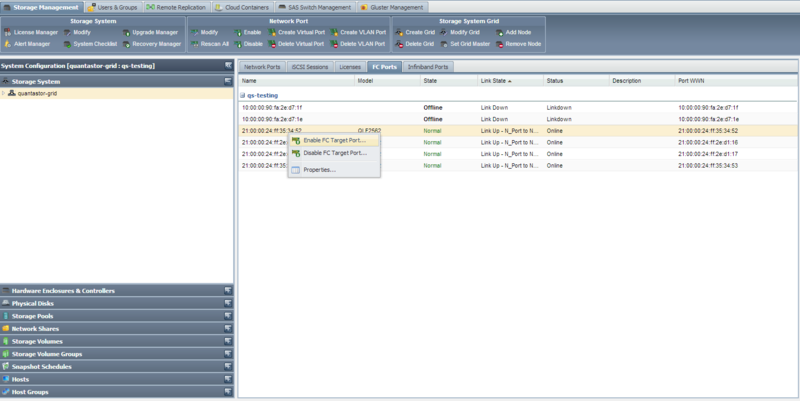

Using Fibre Channel

Setting up a datastore in VMware using fibre channel takes just a few simple steps.

- First verify that the fibre channel ports are enabled from within QuantaStor. You can enable them by right clicking on the port and selecting 'Enable FC Target Port'.

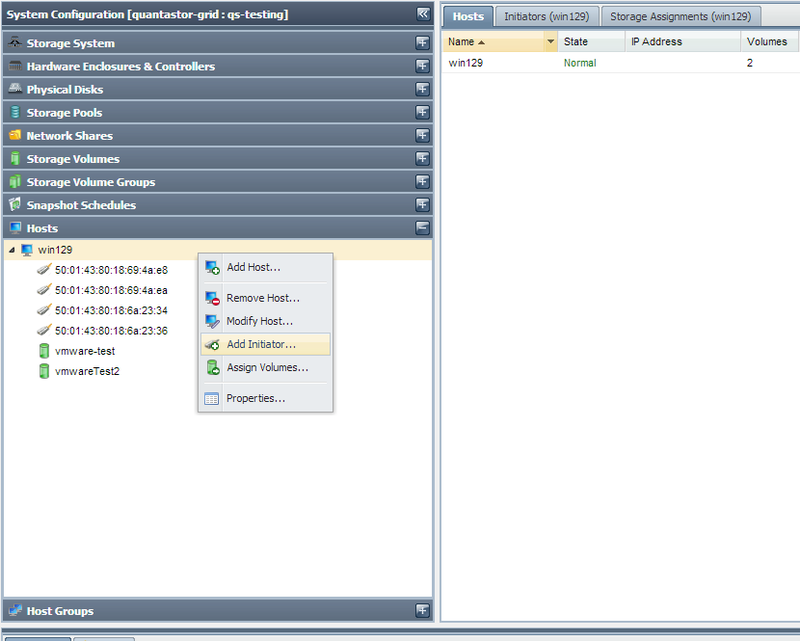

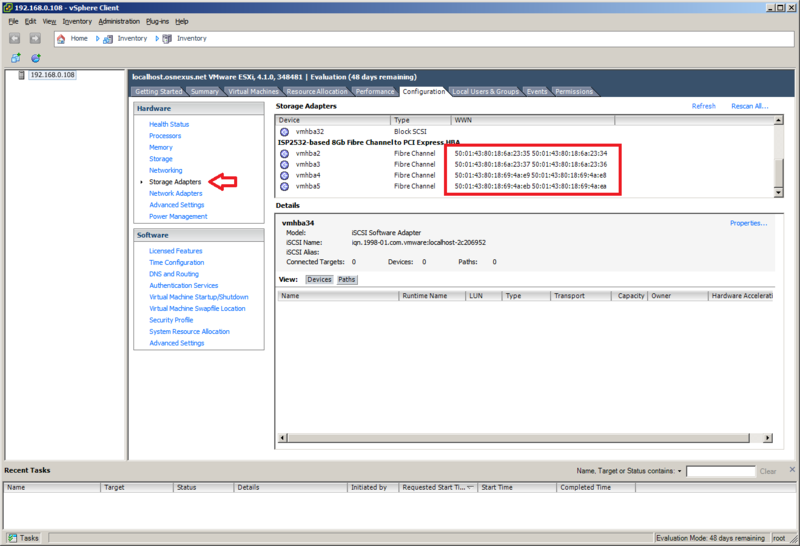

- Create a host entry within QuantaStor, and add all of the WWNs for the fibre channel adapters. To add more than one initiator to the QuantaStor host entry, right click on the host and select 'Add Initiator'. The WWNs can be found in the 'Configuration' tab, under the 'Storage Adapters' section.

- Now add the desired storage volume to the host you just created. This storage volume will be the Datastore that is within VMware.

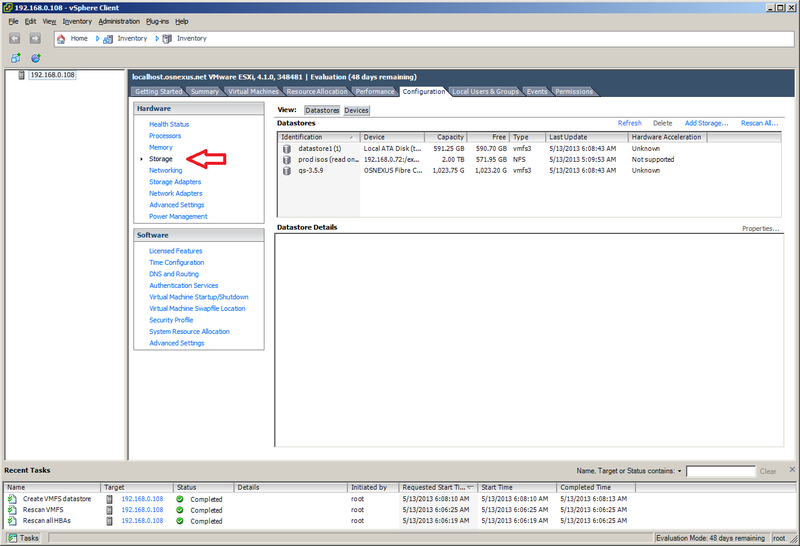

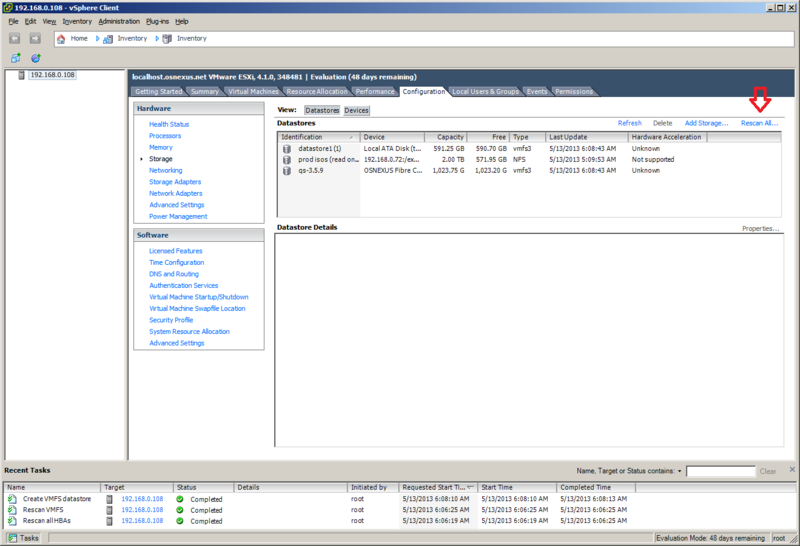

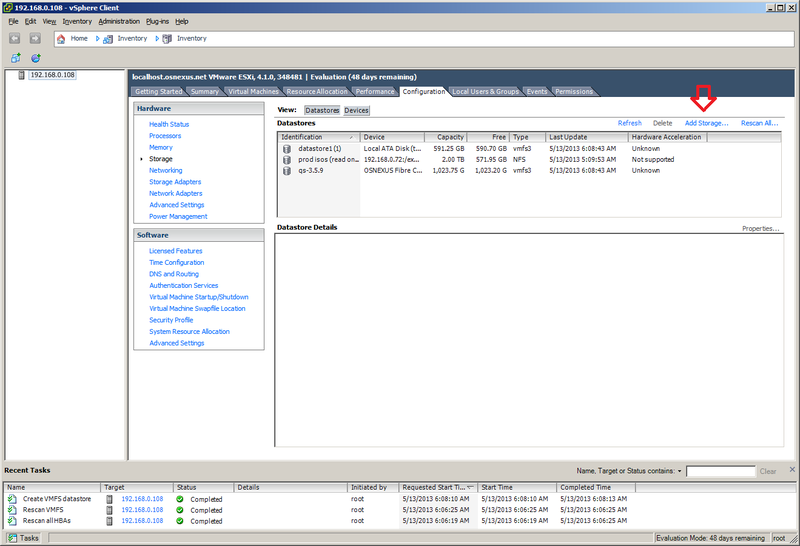

- Next, navigate to the 'Storage' section of the 'Configuration' tab. From within here we will want to select 'Rescan All...'. In the rescan window that pops up, make sure that 'Scan for New Storage Devices' is selected and click 'OK'.

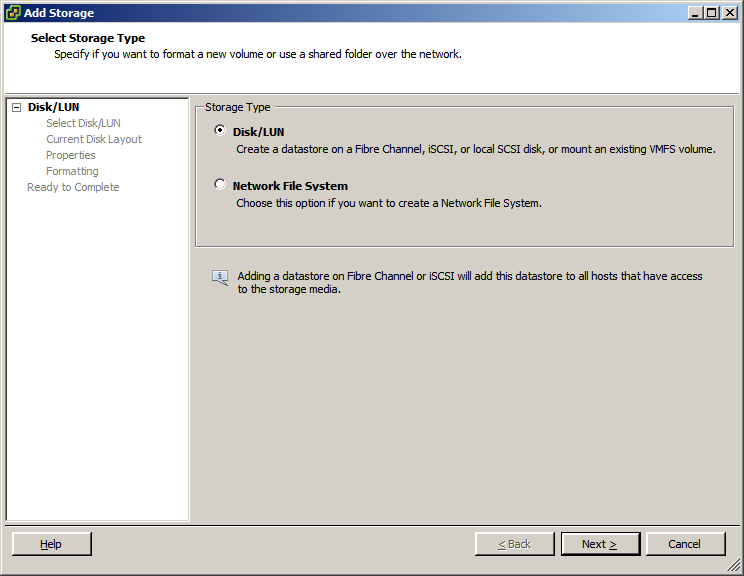

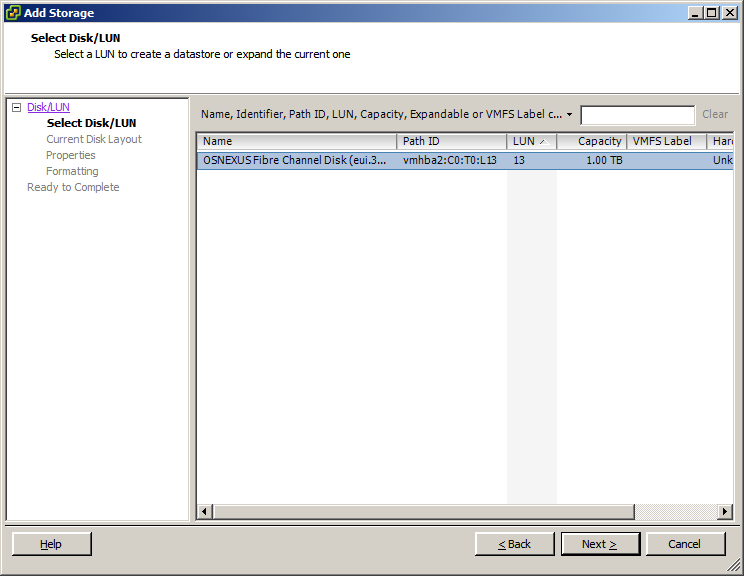

- After the scan is finished (you can see the progress of the task at the bottom of the screen), select the 'Add Storage...' option. Make sure that 'Disk/LUN' is selected and click next. If everything is configured correctly you should now see the storage volume that was assigned before. Select the storage volume and finish the wizard.

You should now be able to use the storage volume that was assigned from within QuantaStor as a datastore on the VMware server.

Using iSCSI

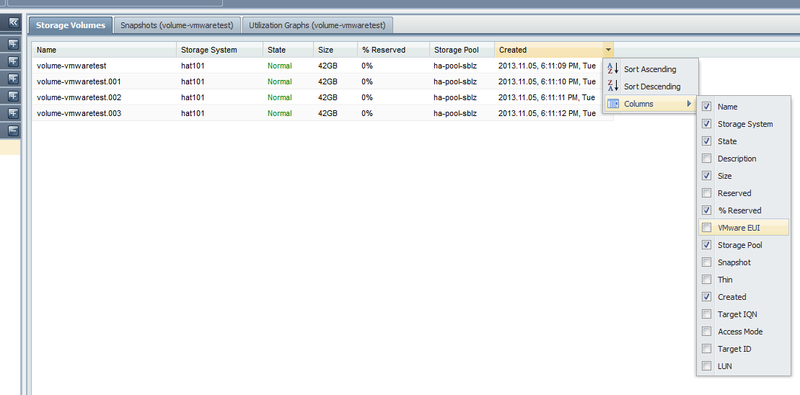

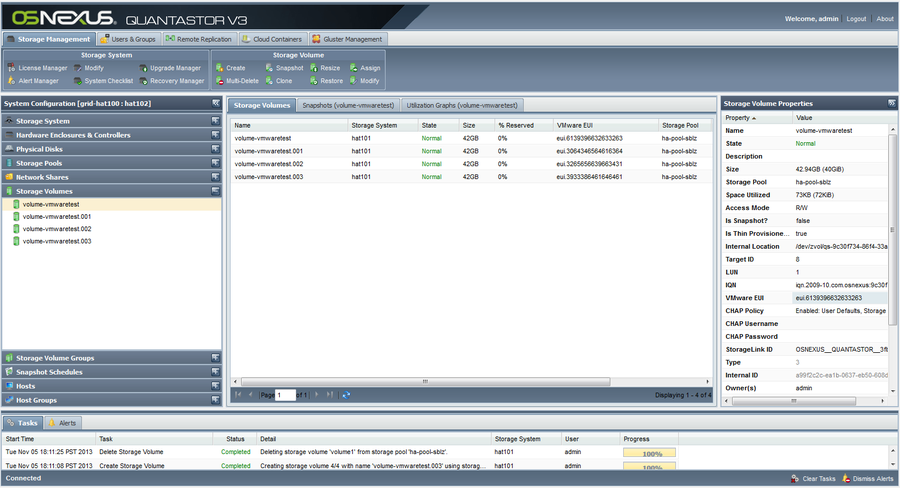

The VMware EUI unique identifier for any given volume can be found in the properties page for the Storage Volume in the QuantaStor web management interface. There is also a column you can activate as shown in this screenshot.

This screen shows a list of Storage Volumes and their associated VMware EUIs which you can use to correlate the Storage Volumes with your iSCSI device list in VMware vSphere.

Here is a video explaining the steps on how to set up a datastore using iSCSI. Creating a VMware vSphere iSCSI Datastore with QuantaStor Storage

Using NFS

Here is a video explaining the steps on how to set up a datastore using NFS. Creating a VMware datastore using an NFS share on QuantaStor Storage

XenServer Configuration

Creating XenServer Storage Repositories (SRs)

Generally speaking, there are three types of XenServer storage repositories (SRs) you can use with QuantaStor.

- iSCSI Software SR

- StorageLink SR

- NFS SR

The first is the 'iSCSI Software SR', which allows you to assign a single QuantaStor storage volume to XenServer; XenServer then creates multiple virtual disks (VDIs) within that one storage volume/LUN. This route is easy to set up but it has some drawbacks:

**These pros and cons are outdated**

iSCSI Software SR

- Pros:

- Easy to set up

- Cons:

- The disk is formatted and laid out using LVM so the disk cannot be easily accessed outside of XenServer

- The custom LVM disk layout makes it so that you cannot easily migrate the VM to a physical machine or other hypervisors like Hyper-V & VMware.

- No native XenServer support for hardware snapshots & cloning of VMs

- Potential for spindle contention problems which reduces performance when the system is under high load.

- Within QuantaStor Manager you only see a single volume, so you cannot set up custom snapshot schedules per VM and cannot roll back a single VM from a snapshot.

The second and most preferred route is to create your VMs using a Citrix StorageLink SR. With StorageLink there is a one-to-one mapping of a QuantaStor storage volume to each virtual disk (VDI) seen by each XenServer virtual machine.

Citrix StorageLink SR

- Pros:

- Each VM is on a separate LUN(s)/storage volume(s) so you can set up custom snapshot policies

- Leverage hardware snapshots and cloning to rapidly deploy large numbers of VMs

- Enables migration of VMs between XenServer and Hyper-V using Citrix StorageLink

- Enables you to set up custom off-host backup policies and scripts for each individual VM

- Easier migration of VMs between XenServer resource pools

- VM Disaster Recovery support via StorageLink (requires QuantaStor Platinum Edition)

- Cons:

- Requires a Windows host or VM to run StorageLink on.

- Some additional licensing costs to run Citrix Essentials depending on your configuration.

- (A free version exists which supports 1 storage system)

With the next version of Citrix StorageLink we'll be directly integrated so it will be just as easy to get going using the StorageLink SR if not easier as it will do all the array side configuration for you automatically.

XenServer NFS Storage Repository Setup & Configuration

XenServer iSCSI Storage Repository Setup & Configuration

Creating a XenServer Software iSCSI SR

Now that we've discussed the pros/cons, let's get down to creating a new SR. We'll start with the traditional "one big LUN" iSCSI Software SR. The name is perhaps a little misleading, but it's called a 'Software' SR because Citrix is trying to make clear that the iSCSI software initiator is utilized to connect to your storage hardware. Here's a summary of the steps you'll need to take within the QuantaStor Manager web interface before you create the SR.

- Login to QuantaStor Manager

- Create a storage volume that is large enough to fit all the VMs you're going to create.

- Note: If you use a large thin-provisioned volume that will give you the greatest flexibility.

- Add a host for each node in your XenServer resource pool

- Note: You'll want to identify each XenServer host by its IQN. See the troubleshooting section for a picture of XenCenter showing a server's initiator IQN.

- (optional) Create a host group that combines all the Hosts

- Assign the storage volume to the host group

Alternatively you can assign the storage to each host individually but you'll save a lot of time by assigning it to a host group and it makes it easier to create more SRs down the road.

After you have the storage volume created and assigned to your XenServer hosts, you can now create the SR.

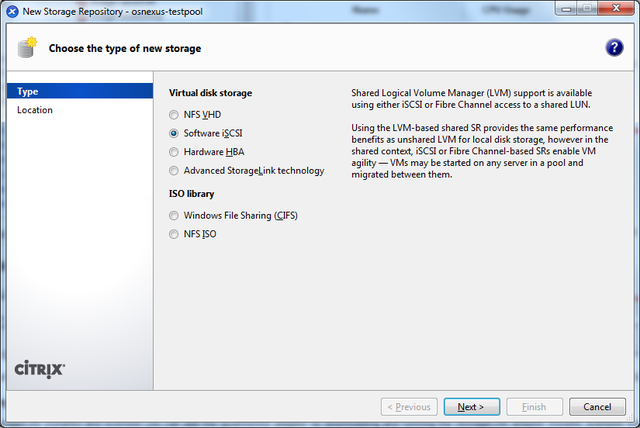

This picture shows the iSCSI Software SR type selected.

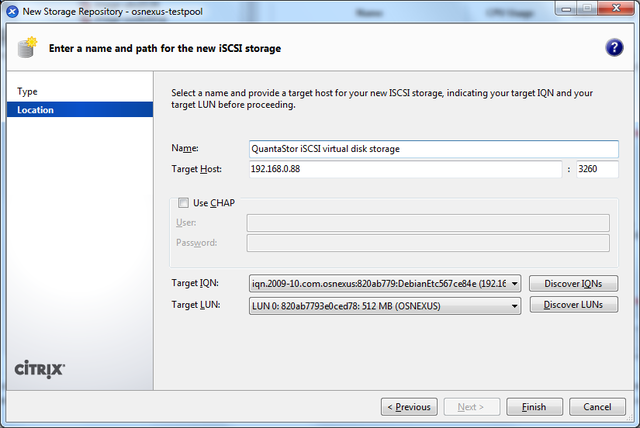

This screen capture shows the main page of the wizard. Enter the IP address of the QuantaStor storage system and then press the Discover Targets and Discover LUNs buttons to find the available devices. Once selected, press OK to complete the mapping.

Optimal XenServer iSCSI timeout settings

Since the virtual machines on the XenServer will be using the iSCSI SR for their boot device, it is important to change the timeout intervals so that the iSCSI layer has several chances to try to re-establish a session and so that commands are not quickly requeued to the SCSI layer. This is especially important in environments with potential for high latency.

Per the README from open-iSCSI, we recommend the following settings in the iscsid.conf file for a XenServer.

- Turn off iSCSI pings by setting:

- node.conn[0].timeo.noop_out_interval = 0

- node.conn[0].timeo.noop_out_timeout = 0

- Set the replacement timeout to a very long value:

- node.session.timeo.replacement_timeout = 86400
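If you have several XenServer hosts to update, editing each file by hand gets tedious. The following is a minimal sketch of a helper that sets one key in an iscsid.conf-style file, replacing an existing uncommented assignment or appending a new line. The function name is hypothetical, and /etc/iscsi/iscsid.conf is the usual open-iscsi location, which is an assumption for your system.

```shell
#!/bin/sh
# Sets "key = value" in an iscsid.conf-style file, replacing an existing
# uncommented assignment or appending one if none exists.
# $1 = config file, $2 = key, $3 = value
set_iscsid_option() {
    tmp="$1.tmp.$$"
    awk -v key="$2" -v val="$3" '
        {
            line = $0
            sub(/^[ \t]+/, "", line)
            rest = substr(line, length(key) + 1)
            # Literal prefix match, so "[0]" and "." are not treated as regex.
            if (index(line, key) == 1 && rest ~ /^[ \t]*=/) {
                if (!seen) print key " = " val
                seen = 1
                next
            }
            print
        }
        END { if (!seen) print key " = " val }
    ' "$1" > "$tmp" && cat "$tmp" > "$1" && rm -f "$tmp"
}

# Usage as root on each XenServer host (not run here). The path is the
# usual open-iscsi location and is an assumption for your system.
#   conf=/etc/iscsi/iscsid.conf
#   cp -p "$conf" "$conf.orig"
#   set_iscsid_option "$conf" 'node.conn[0].timeo.noop_out_interval' 0
#   set_iscsid_option "$conf" 'node.conn[0].timeo.noop_out_timeout' 0
#   set_iscsid_option "$conf" 'node.session.timeo.replacement_timeout' 86400
```

The helper writes the result back with cat rather than mv so that the original file's ownership and permissions are kept. Note that iscsid.conf supplies defaults for newly discovered targets, so targets that were already discovered may need to be rediscovered or updated with iscsiadm before the new values apply.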

Installing the XenServer multipath configuration settings

XenServer utilizes the Linux device-mapper multipath driver (dmmp) to enable support for hardware multi-pathing. The dmmp driver has a configuration file called multipath.conf that contains the multipath mode and failover rules for each vendor. There are some special rules that need to be added to that file for QuantaStor as well. We're working closely with Citrix to get this integrated so that everything is pre-installed with their next product release but until then, the following changes will need to be implemented. You'll need to edit the /etc/multipath-enabled.conf file located on each of your XenServer dom0 nodes. In that file, just find the last device { } section and add this additional one for QuantaStor:

device {
    vendor "OSNEXUS"
    product "QUANTASTOR"
    getuid_callout "/sbin/scsi_id -g -u -s /block/%n"
    path_selector "round-robin 0"
    path_grouping_policy multibus
    failback immediate
    rr_weight uniform
    rr_min_io 100
    path_checker tur
}

- A correctly configured multipath-enabled.conf file that includes the above changes can be downloaded from our website: multipath-enabled.conf

- The file can be downloaded directly from your XenServer host using the wget command. We suggest you save a copy of your original file first.
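If you prefer to patch your existing file instead of replacing it, the manual edit described above can be sketched as follows. This is an illustration under stated assumptions, not a supported tool: the function name `add_quantastor_device` is hypothetical, device stanzas are assumed to contain no nested braces, and each closing brace is assumed to sit on its own line.

```python
import re
import textwrap

# The QuantaStor device stanza from this guide.
QUANTASTOR_DEVICE = """\
device {
    vendor "OSNEXUS"
    product "QUANTASTOR"
    getuid_callout "/sbin/scsi_id -g -u -s /block/%n"
    path_selector "round-robin 0"
    path_grouping_policy multibus
    failback immediate
    rr_weight uniform
    rr_min_io 100
    path_checker tur
}
"""


def add_quantastor_device(conf_text):
    """Return conf_text with the QuantaStor stanza added after the last device { } section."""
    if 'vendor "OSNEXUS"' in conf_text:
        return conf_text  # already present, nothing to do

    # Uncommented lines that open a device section ("devices {" does not match).
    matches = list(re.finditer(r"^([ \t]*)device\s*\{", conf_text, re.MULTILINE))
    if not matches:
        raise ValueError("no device { } section found")
    last = matches[-1]

    # Assumption: no nested braces, so the next '}' closes the section.
    close = conf_text.find("}", last.end())
    if close == -1:
        raise ValueError("last device section is not closed")

    # Insert on the line following the closing brace.
    line_end = conf_text.find("\n", close)
    insert_at = len(conf_text) if line_end == -1 else line_end + 1
    head = conf_text[:insert_at]
    if not head.endswith("\n"):
        head += "\n"

    # Reuse the indentation of the existing device section.
    stanza = textwrap.indent(QUANTASTOR_DEVICE, last.group(1))
    return head + stanza + conf_text[insert_at:]
```

The function is safe to run more than once because it returns the text unchanged when an OSNEXUS stanza is already present.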

XenServer StorageLink Storage Repository Setup & Configuration

The following notes on StorageLink support apply only to XenServer version 6.x. Starting with version 2.7, QuantaStor includes an adapter that works with the StorageLink features integrated into XenServer.

Installing the QuantaStor Adapter for Citrix StorageLink

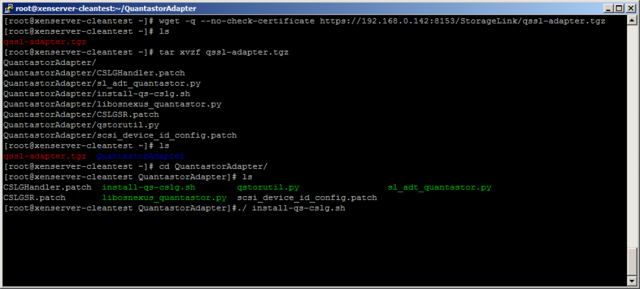

Installing the adapter takes just a few simple steps. First, get the files from the QuantaStor box. To do this you can use the command below with the IP address of your QuantaStor system:

wget -q --no-check-certificate https://<quantastor-ip-address>:8153/StorageLink/qssl-adapter.tgz

Alternatively, you can download the file from the OSNEXUS package server using the following command. This is recommended if you're using bonding, as this version includes a fix for that. (Note: QuantaStor v3.6 will include the updated StorageLink tar file, so the first command will also work for that version.)

wget http://packages.osnexus.com/patch/qssl-adapter.tgz

Next, unpack the downloaded .tgz file by running the command:

tar zxvf qssl-adapter.tgz

After the file is unpacked, cd into the directory.

cd QuantastorAdapter/

Now you can run the installer file. This should copy the adapter files to the correct directories and apply a few minor patches to the StorageLink files. You can run the installer with the command:

./install-qs-cslg.sh

NOTE: These steps must be done on every XenServer host in the pool.

QuantaStor Utility Script

The installer also comes with a file named qstorutil.py. This file can be executed with specific command line arguments to discover volumes, create storage repositories, and introduce preexisting volumes. The file is also copied to “/usr/bin/qstorutil.py” during the installation of the QuantaStor adapter for easier access.

The script has a help page with examples that can be accessed via: qstorutil.py --help

While the script is the easiest way to create storage repositories or introduce volumes, the adapter will work just fine using the xe commands. We provide the qstorutil.py to make the configuration simpler due to the large number of somewhat complex arguments required by xe.

Creating XenServer Storage Repositories (SRs)

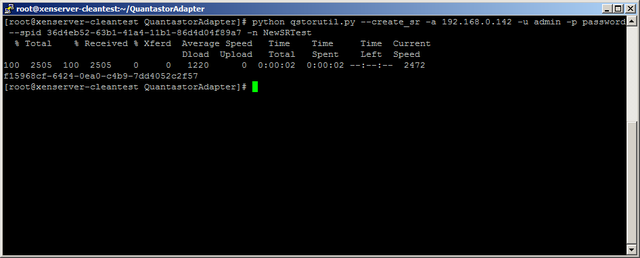

The easiest way to create a XenServer storage repository is to use the QuantaStor utility script. To do this you can run either of the commands below:

qstorutil.py --create_sr -a <quantastor-ip-address> -u admin -p password --spid <quantastor-storage-pool-uuid> -n <sr-name>

qstorutil.py --create_sr -a <quantastor-ip-address> -u admin -p password --sp_name <quantastor-storage-pool-name> -n <sr-name>

Both of these commands will create a storage repository. One takes the QuantaStor storage pool ID (spid), whereas the other takes the QuantaStor storage pool name and uses it to look up the spid. When you create a storage repository, a small metadata volume is also created. The metadata volume name will have the prefix “SL_XenStorage__” followed by a GUID.
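If you create storage repositories from your own automation, it can help to assemble the argument list in one place. The sketch below only builds the command line shown above; the function name `build_create_sr_command` is hypothetical, and the rule that exactly one of the pool ID or pool name is given is a choice made for this sketch, not documented qstorutil.py behavior.

```python
def build_create_sr_command(address, user, password, sr_name, spid=None, sp_name=None):
    """Build the qstorutil.py --create_sr argument list.

    Only flags that appear in this guide are used. Exactly one of spid
    (storage pool UUID) or sp_name (storage pool name) must be given;
    that restriction is an assumption of this sketch.
    """
    if (spid is None) == (sp_name is None):
        raise ValueError("give exactly one of spid or sp_name")
    pool_args = ["--spid", spid] if spid is not None else ["--sp_name", sp_name]
    return [
        "qstorutil.py", "--create_sr",
        "-a", address, "-u", user, "-p", password,
        *pool_args,
        "-n", sr_name,
    ]
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` on a XenServer host where the adapter is installed.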

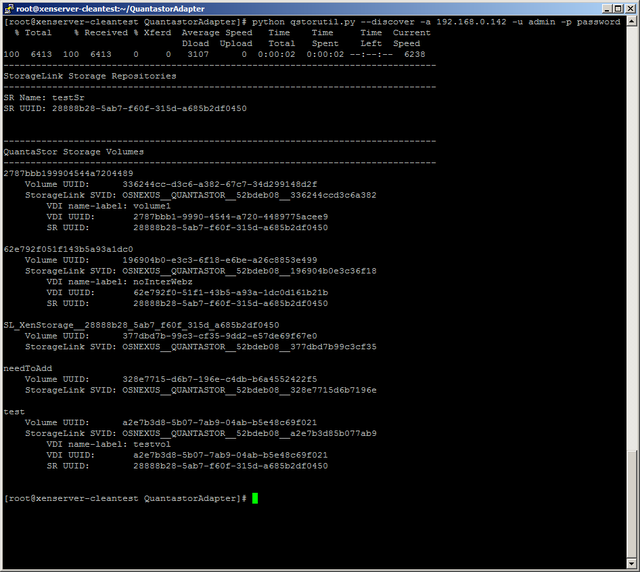

Storage Volume Discovery

Storage volume discovery shows a list of all the volumes on the QuantaStor box specified by IP address. For each volume the list gives the volume name, the QuantaStor ID of the volume, the StorageLink ID of the volume, the storage system name, and the storage system ID. If the volume is in a storage repository on the local XenServer system, three additional fields are shown: the VDI name, the VDI UUID, and the storage repository UUID.

In addition to listing all of the volumes, the discover command will also print out the storage repositories on the local XenServer system. The fields listed are the storage repository name, and the storage repository uuid.

To call the discovery command you can use the command below:

qstorutil.py --discover -a <quantastor-ip-address> -u admin -p password

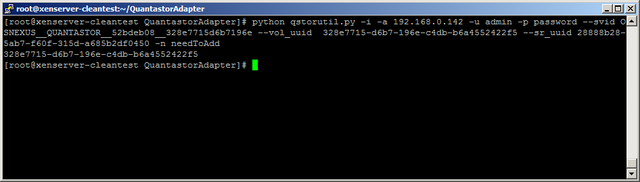

Introducing a Preexisting Volume to XenServer

To introduce a preexisting volume to XenServer you should first run the storage volume discovery command. To introduce a volume run one of the three commands below:

qstorutil.py --vdi_introduce -a <quantastor-ip-address> -u admin -p password --vol_uuid <quantastor-volume-uuid> --sr_uuid <storage-repository-uuid> -n <vol-name>

qstorutil.py --vdi_introduce -a <quantastor-ip-address> -u admin -p password --svid <storagelink-volume-id> --sr_uuid <storage-repository-uuid> -n <vol-name>

qstorutil.py --vdi_introduce --svid <storagelink-volume-id> --vol_uuid <quantastor-volume-uuid> --sr_uuid <storage-repository-uuid> -n <vol-name>

Each of these commands does exactly the same thing; they just require slightly different parameters. Use whichever command works best for you. The reason for running the discover command before introducing a volume is that the vol_uuid, svid, and sr_uuid values are all provided in the discover output.
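The three forms differ only in which identifiers you supply, so a script can pick the form from whatever it has on hand. The following is a rough sketch with a hypothetical function name, `build_vdi_introduce_command`; the selection order (prefer the login plus vol_uuid form, then login plus svid, then svid plus vol_uuid) is an arbitrary choice for illustration.

```python
def build_vdi_introduce_command(sr_uuid, name, vol_uuid=None, svid=None,
                                address=None, user=None, password=None):
    """Build one of the three qstorutil.py --vdi_introduce forms shown above."""
    have_login = None not in (address, user, password)
    cmd = ["qstorutil.py", "--vdi_introduce"]
    if have_login and (vol_uuid or svid):
        # Forms 1 and 2: login details plus a single volume identifier.
        cmd += ["-a", address, "-u", user, "-p", password]
        cmd += ["--vol_uuid", vol_uuid] if vol_uuid else ["--svid", svid]
    elif svid and vol_uuid:
        # Form 3: both identifiers, no login details.
        cmd += ["--svid", svid, "--vol_uuid", vol_uuid]
    else:
        raise ValueError("need login details plus one ID, or both svid and vol_uuid")
    cmd += ["--sr_uuid", sr_uuid, "-n", name]
    return cmd
```

All identifier values come from the discover output, so a typical script would run the discover command first and feed its results into this helper.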

Virtual Storage Appliance iSCSI Configuration

With the rapid increase in storage density and compute power, the storage system itself is becoming just another workload, and this is what QuantaStor is ultimately focused on enabling with our Software Defined Storage platform. This section outlines how to set up QuantaStor as a virtual machine, a.k.a. a Virtual Storage Appliance (VSA), so that you can deliver dedicated virtual appliances to your internal customers. VSAs can get their storage from DAS in the server where the VM is running or via NAS or SAN storage. In this section we'll go over how to configure a VSA to get storage over iSCSI, which it can then use to create a pool of storage for sharing out over SAN/NAS protocols.

Configuring Your SAN for the VSA

You can use SAN storage from any physical storage system (QuantaStor, EqualLogic, etc.) with your VSA, and the configuration steps will vary according to the vendor. For the purposes of this article we'll call the system that's delivering storage to the VSA the physical storage appliance, or PSA for short.

Configuration Summary

Assuming you're using QuantaStor for your PSA, you'll need to create a Storage Volume, add a Host entry for the VSA, and then assign the storage to the IQN of the VSA. Within the VSA we'll also need to install the iSCSI initiator software, configure it, and then set it up to automatically log in to your QuantaStor PSA at boot time.

Installing the iSCSI Initiator service on the VSA

We're assuming that you have a VSA set up and booted at this point but have not yet created any storage pools, because no storage has yet been delivered from the PSA to the VSA. So the first step is to install the open-iscsi initiator tools like so:

sudo apt-get install open-iscsi

Next you'll need to check to see what the IQN is for your VSA by running this command:

more /etc/iscsi/initiatorname.iscsi

The IQN will look something like this:

InitiatorName=iqn.1993-08.org.debian:01:5463d5881ec2

You can use that IQN as-is or modify this to make an IQN that better identifies your VSA system if you like but this is optional. For this example we'll use this as the VSA's initiator IQN:

InitiatorName=iqn.2012-01.com.quantastor:qstorvsa001
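If you manage several VSAs, a small helper can read the initiator IQN out of the file and sanity-check a custom name before you use it. The sketch below is illustrative only: the function names are hypothetical, and the pattern is a deliberately loose check of the `iqn.yyyy-mm.reversed-domain[:identifier]` shape, not a full validation of the iSCSI naming rules.

```python
import re

# Loose shape check: "iqn.", a year-month date, a reversed domain name,
# and an optional ":identifier" suffix. This is an approximation only.
IQN_PATTERN = re.compile(r"^iqn\.\d{4}-\d{2}\.[a-z0-9.-]+(:.+)?$")


def read_initiator_iqn(file_text):
    """Return the IQN from the text of initiatorname.iscsi, or None if absent."""
    for line in file_text.splitlines():
        line = line.strip()
        if line.startswith("InitiatorName="):
            return line.split("=", 1)[1].strip()
    return None


def looks_like_iqn(name):
    """Return True if name has the general shape of an IQN."""
    return IQN_PATTERN.match(name) is not None
```

For example, you could pass the contents of /etc/iscsi/initiatorname.iscsi to `read_initiator_iqn` and check any replacement name with `looks_like_iqn` before writing it back.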

Adding a Host entry for the VSA into the PSA

The IQN we set for the VSA is 'iqn.2012-01.com.quantastor:qstorvsa001' in this example, and we'll need to add this to a new host entry in the PSA so that we can assign storage to the VSA from the PSA. In your QuantaStor system just select the Hosts section, right-click, choose 'Add Host', and add the host with its IQN. Once the Host entry has been added for the VSA you'll need to assign a storage volume to it. We'll next use the iSCSI initiator on the VSA to log in to the PSA so that the VSA can consume that storage volume.

If you're having trouble getting the PSA configured remember that within the QuantaStor web management screen there's a 'System Checklist' button in the toolbar that will walk you through all of these configuration steps.

Logging into the PSA target storage volume from the VSA

Now that the tools are installed, you've created a Storage Volume in the PSA, and you've assigned it to the VSA host entry in the PSA, you can log in to access that storage. Start by discovering the available targets with this command, substituting the IP address of your PSA:

iscsiadm -m discovery -t st -p 192.168.0.137

This will have output that looks something like this:

192.168.0.137:3260,1 iqn.2009-10.com.osnexus:cd4c0fb9-bddfb4774939e62a:volume0

To log in to all the volumes you've assigned to the VSA you can run this simple command:

iscsiadm -m discovery -t st -p 192.168.0.137 --login

The output from that command looks like this:

192.168.0.137:3260,1 iqn.2009-10.com.osnexus:cd4c0fb9-bddfb4774939e62a:volume0
Logging in to [iface: default, target: iqn.2009-10.com.osnexus:cd4c0fb9-bddfb4774939e62a:volume0, portal: 192.168.0.137,3260]
Login to [iface: default, target: iqn.2009-10.com.osnexus:cd4c0fb9-bddfb4774939e62a:volume0, portal: 192.168.0.137,3260]: successful

You have now logged into the storage volume on the PSA and your VSA now has additional storage it can access to create storage pools from which it can in turn deliver iSCSI storage volumes, network shares and more.
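If you want to script a check of which targets the PSA is offering, the discovery records shown above are easy to parse. Here is a minimal sketch, assuming each record has the form `address:port,tpgt target-iqn` as in the sample output; the names `DiscoveredTarget` and `parse_discovery` are hypothetical.

```python
from typing import NamedTuple


class DiscoveredTarget(NamedTuple):
    address: str
    port: int
    tpgt: int  # target portal group tag, the number after the comma
    iqn: str


def parse_discovery(output):
    """Parse discovery records out of iscsiadm output text.

    Lines that are not two-field discovery records (for example the
    "Logging in to ..." lines printed with --login) are skipped.
    """
    targets = []
    for line in output.splitlines():
        parts = line.split()
        if len(parts) != 2 or not parts[1].startswith("iqn."):
            continue
        portal, iqn = parts
        host_port, _, tpgt = portal.partition(",")
        address, _, port = host_port.rpartition(":")
        if not (port.isdigit() and tpgt.isdigit()):
            continue
        targets.append(DiscoveredTarget(address, int(port), int(tpgt), iqn))
    return targets
```

QuantaStor targets can then be picked out by checking that the `iqn` field starts with `iqn.2009-10.com.osnexus:`.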

Verifying that the Storage is Available

To verify that the storage is available you can run this command which will show you that your VSA now has a QuantaStor device attached:

more /proc/scsi/scsi

Attached devices:
Host: scsi1 Channel: 00 Id: 00 Lun: 00
  Vendor: VBOX     Model: CD-ROM           Rev: 1.0
  Type:   CD-ROM                           ANSI  SCSI revision: 05
Host: scsi4 Channel: 00 Id: 00 Lun: 00
  Vendor: OSNEXUS  Model: QUANTASTOR       Rev: 300
  Type:   Direct-Access                    ANSI  SCSI revision: 06
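The same check can be automated by scanning the /proc/scsi/scsi text for a device whose vendor is OSNEXUS and whose model is QUANTASTOR, as in the listing above. This is a small sketch with hypothetical function names; it assumes each device entry carries Host, Vendor, Model, and Rev fields in that order.

```python
import re

# One match per device entry: Host, then Vendor, Model and Rev.
DEVICE_RE = re.compile(
    r"Host:\s*(\S+).*?Vendor:\s*(.*?)\s*Model:\s*(.*?)\s*Rev:\s*(\S+)",
    re.DOTALL,
)


def attached_devices(proc_text):
    """Return a list of dicts describing each attached SCSI device."""
    return [
        {"host": host, "vendor": vendor, "model": model, "rev": rev}
        for host, vendor, model, rev in DEVICE_RE.findall(proc_text)
    ]


def has_quantastor_device(proc_text):
    """Return True if any attached device reports OSNEXUS / QUANTASTOR."""
    return any(
        d["vendor"] == "OSNEXUS" and d["model"] == "QUANTASTOR"
        for d in attached_devices(proc_text)
    )
```

On the VSA you would read /proc/scsi/scsi into a string and pass it to `has_quantastor_device` after logging in to the PSA.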

Importing the Storage into QuantaStor

Now that the storage has arrived at the appliance, you'll need to use the 'Scan for Disks...' dialog within your QuantaStor VSA to make the storage appear. Once it appears you can create a storage pool out of it and start using it.

Persistent Logins / Automatic iSCSI Login after Reboot

Last but not least, you'll need to set up your VSA so that it connects to the PSA automatically at system startup. The easiest way to do this is to edit the /etc/iscsi/iscsid.conf file to enable automatic logins. The default is 'manual', so you'll need to change that to 'automatic' so that it looks like this:

#*****************
# Startup settings
#*****************
# To request that the iscsi initd scripts startup a session set to "automatic".
node.startup = automatic

Alternatively you could script a line in your /etc/rc.local file to login just as you did above to initially setup the VSA:

iscsiadm -m discovery -t st -p 192.168.0.137 --login

XenServer NIC Optimization

If you are using XenServer you may see a nice boost in performance by replacing the default Realtek driver with the E1000 driver, which has 1GbE support. Have a look at the article over at www.netservers.co.uk that outlines the hack.

Troubleshooting

If you have problems with the above, try restarting the iSCSI initiator service with 'service iscsi-network-interface restart'. Do not restart the 'iscsi-target' service, as that is the service that serves targets from your VSA out to the hosts consuming the VSA storage. If you do restart the 'iscsi-target' service by mistake, you'll need to restart the 'quantastor' service to re-expose the iSCSI targets for that system.