Difference between revisions of "Scale-out Block Setup (ceph)"

(Created page with "== Scale-out iSCSI Block Storage (Ceph based) == === Minimum Hardware Requirements === * 3x QuantaStor storage appliances minimum (up to 64x appliances) * 1x write endurance...") |

m |

||

| Line 32: | Line 32: | ||

[[File:osn_ceph_workflow2.png|700px]] | [[File:osn_ceph_workflow2.png|700px]] | ||

| + | |||

| + | |||

| + | {{Template:CephTerminology}} | ||

Revision as of 02:35, 2 March 2016

Scale-out iSCSI Block Storage (Ceph based)

Minimum Hardware Requirements

- 3x QuantaStor storage appliances minimum (up to 64x appliances)

- 1x write endurance SSD device per appliance to make journal devices from. Have 1x SSD device for each 4x Ceph OSDs.

- 5x to 100x HDDs or SSD for data storage per appliance

- 1x hardware RAID controller for OSDs (SAS HBA can also be used but RAID is faster)

Setup Process

- Login to the QuantaStor web management interface on each appliance

- Add your license keys, one unique key for each appliance

- Setup static IP addresses on each node (DHCP is the default and should only be used to get the appliance initially setup)

- Right-click on the storage system, choose 'Modify Storage System..' and set the DNS IP address (eg: 8.8.8.8), and the NTP server IP address (important!)

- Setup separate front-end and back-end network ports (eg eth0 = 10.0.4.5/16, eth1 = 10.55.4.5/16) for iSCSI and Ceph traffic respectively

- Create a Grid out of the 3 appliances (use Create Grid then add the other two nodes using the Add Grid Node dialog)

- Create hardware RAID5 units using 5 disks per RAID unit (4d + 1p) on each node until all HDDs have been used (see Hardware Enclosures & Controllers section for Create Hardware RAID Unit)

- Create Ceph Cluster using all the appliances in your grid that will be part of the Ceph cluster, in this example of 3 appliances you'll select all three.

- Use OSD Multi-Create to setup all the storage, in that dialog you'll select some SSDs to be used as Journal Devices and the HDDs to be used for data storage across the cluster nodes. Once selected click OK and QuantaStor will do the rest.

- Create a scale-out storage pool by going to the Scale-out Block Storage section, then choose the Ceph Storage Pool section, then create a pool. It will automatically select all the available OSDs.

- Create a scale-out block storage device (RBD / RADOS Block Device) by choosing the 'Create Block Device/RBD' or by going to the Storage Management tab and choosing 'Create Storage Volume' and then select your Ceph Pool created in the previous step.

At this point everything is configured and a block device has been provisioned. To assign that block device to a host for access via iSCSI, you'll follow the same steps as you would for non-scale-out Storage Volumes.

- Go to the Hosts section then choose Add Host and enter the Initiator IQN or WWPN of the host that will be accessing the block storage.

- Right-click on the Host and choose Assign Volumes... to assign the scale-out storage volume(s) to the host.

Repeat the Storage Volume Create and Assign Volumes steps to provision additional storage and to assign it to one or more hosts.

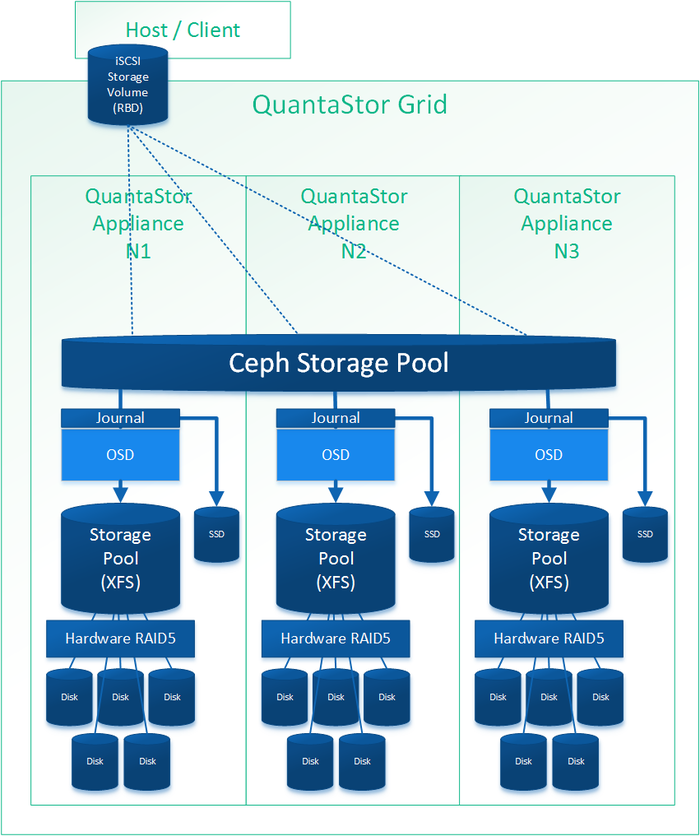

Diagram of Completed Configuration

Terminology

What is a Ceph Cluster?

A ceph cluster is a group of three or more appliances that have been clustered together using the ceph storage technology. When a cluster is initially created QuantaStor configures the first three appliances to have active Ceph Monitor services running. On configurations with more than 16 nodes two additional monitors should be setup can this can be done through the QuantaStor WUI in the Scale-out Block & Object section. Note that when the Ceph Cluster is initially created there is no storage associated with it (OSDs), only monitors.

What is a Ceph Monitor?

The Ceph Monitors form a paxos part-time parliment cluster for the management of cluster membership, configuration information, and state. Paxos is an algorithm (developed by Leslie Lamport in the late 80s) which uses a three-phase consensus protocol to ensure that cluster updates can be done in a fault-tolerant timely fashion even in the event of a node outage or node that is acting improperly. Ceph uses the algorithm so that the membership, configuration and state information is updated safely across the cluster in an efficient manner. Since the algorithm requires a quorum of nodes to agree on any given change an odd number of appliances (three or more) are required for any given Ceph cluster deployment. Monitors startup automatically when the appliance starts and the status and health of monitors is monitored by QuantaStor and displayed in the web UI. A minimum of two ceph monitors must be online at all times so in a three node configuration two of the three appliances must be online for the storage to be online and available. In configurations with more than 16 nodes 5x or more monitors should be deployed (additional monitors can be deployed via the QuantaStor Web UI).

What is an OSD?

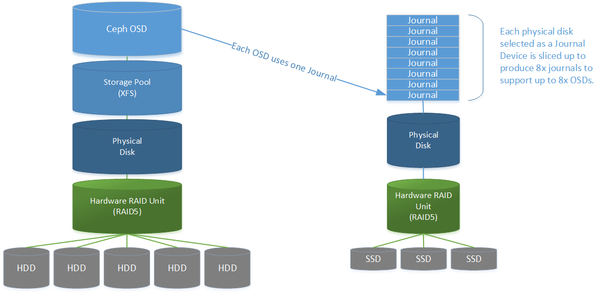

OSD stands for Ceph Object Storage Daemon and it's a Ceph daemon process that reads and writes data. Each Ceph cluster in QuantaStor must have at least 9x OSDs (3x OSDs per appliance) and each OSD is attached to a single Storage Pool. When a client writes data to a Ceph Pool (whether it's via a iSCSI/RBD block device or via the S3/SWIFT gateway) the data is spread out across the OSDs in the cluster. QuantaStor OSDs are always attached to XFS based Storage Pools and are always assigned a journal device. Because the creation of OSDs, the underlying Storage Pools for them, and their associated Journal devices is a multi-step process QuantaStor has a Multi-OSD Create configuration dialog which does all of these configuration steps for an entire cluster in a single dialog. This makes it easy to setup even hyper-scale Ceph deployments in minutes.

What is a Journal Device?

Writes are never cached, and that's important. This is good because it ensures that every write is written to stable media (the disk devices) before Ceph acknowledges to a client that the write is complete. In the event of a power outage while a write is in flight there is no problem as the write is only complete once it's on stable media. The cluster will work around the bad node until it comes back online and re-synchronizes with the cluster. The down side to never caching writes is performance. HDDs are slow due to rotational latency and seek times for spinning disk are high. The solution is to log every write to fast persistent solid state media (SSD, NVMe, X Point, NVDIMM, etc) which is called a journal device. Once written to the journal Ceph can return to the client that the write is "complete" (event though it has not been written out to the slow HDDs) since at that point the data can be recovered automatically from the journal device in the event of a power outage. A second copy of the data is held in RAM and is used to lazily write the data out to disk. In this way the journal device is only used as a write log but never needs to be re-read from. Datacenter grade or Enterprise grade SSDs must be used for Ceph journal devices. Desktop SSD devices are fast for a few seconds then write performance drops significantly, they wear out very quickly, and OSNEXUS will not certify the use of desktop SSD in any production deployment of any kind. By example, we tested with a popular desktop SSD device which produces 600MB/sec but performance quickly dropped to just 30MB/sec after just a few seconds of sustained write load.

Within QuantaStor any block device in the Physical Disks section can be turned into a Journal Device but only high performance write-endurance enterprise SSD, NVMe or PCI SSD devices should be used. A device that is assigned to be a Journal Device is sliced up into 8x journal partitions so that up to 8x OSDs can be supported by the Journal Device. For an appliance with 20x OSDs one would want at least 3x SSDs but in practice one would use more SSDs to ensure even distribution of load across the Journal Devices. Note that with a hardware RAID controller multiple SSDs can be combined using RAID5 to make a high-performance fault-tolerant journal. We recommend using PCI SSD, NVMe devices, multiple SSDs in RAID5 or a pair in RAID1. One can also use a dedicated RAID controller for the RAID5 based SSD journal devices to further boost performance. Again, each of these logical or physical devices is sliced up into 8x journal partitions so that up to 8x OSDs can be supported per Journal Device.

What is a Placement Group (PG)?

Placement groups effectively implement mirroring (or erasure coding) of data across OSDs according to the configured replica count for a given Ceph Pool. When a Ceph Pool is created the user specifies how many copies of the data must be maintained by that pool to ensure a given level of high-availability and fault-tolerance (usually 2 copies when using hardware RAID or 3 copies otherwise). Ceph in turn creates a series of Placement Groups as directed by QuantaStor to be associated with the Ceph Pool. Think of the placement groups as logical mini-mirrors in a RAID10 configuration. Each placement group is either a two-way, three-way or 4-way mirror across 2, 3, or 4 OSDs respectively. Since the number of OSDs will grow over the life of the cluster QuantaStor allocates a large number of PGs for each Ceph Pool to facilitate even distribution of data across OSDs and for future expansion as OSDs are added. In this way Ceph can very efficiently re-organize and re-balance PGs to mirror across new OSDs as they are added. (the PG count stays fixed as OSDs are added but one can run a maintenance command to increase the PG count for a Ceph Pool if the PG count gets low relative to the number of OSDs in the Pool. In general the PG count should be roughly 10x to 100x higher than the OSD count for a given Ceph Pool). Just like in RAID10 technology a PG can become degraded if one or more copies is offline and Ceph is designed to keep running even in a degraded state so that whole systems can go offline without any disruption to clients accessing the cluster. Ceph also automatically repairs and updates the offline PGs once the offline OSDs come back online online and if the offline appliance doesn't come back online in a reasonable amount of time the cluster will auto heal itself by adjusting the PGs swap out the offline OSDs with good online OSDs. In this way a cluster will automatically heal a Ceph Pool back to 100% automatically. Also, if an OSD is explicitly removed, the PGs referencing it are re-balanced and re-organized across the remaining OSDs to recover the system back to 100% health on the remaining OSDs.

What is a Object Storage Group?

S3/SWIFT object storage gateways require the creation and management of several Ceph Pools which together represent a region+zone for the storage of objects and buckets. QuantaStor groups all the Ceph Pools used to manage a given object storage configuration into what is called a Object Storage Group. QuantaStor also automatically deploys and manages Ceph S3/SWIFT Object Gateways on all appliances in the cluster that were selected as gateway nodes when the Object Storage Group was created (additional gateways can be deployed on new or existing nodes at any time). Note that Object Storage Groups are a QuantaStor construct so you won't find documentation about it in general Ceph documentation.

What are User Object Access Entries?

Access to object storage via S3 and SWIFT requires a Access Key and a Secret Key just like with Amazon S3 storage. Each User Object Access Entry is a Access Key + Secret Key pair which is associated with a Ceph Cluster and Object Storage Group. You must allocate at least one User Object Access Entry to read/write buckets and objects to an Object Storage Group via the Ceph S3/SWIFT Gateway.

What is a Resource Domain? / Understanding CRUSH Maps

Ceph has the ability to organize the placement groups (which mirror data across OSDs) so that the PGs mirror across OSDs in such a way that high-availability and fault-tolerance is maintained even in the event of a rack or site outage. A rack of appliances, a site, or building each represent a failure-domain and given the information about where appliances are deployed (site, rack, chassis, etc) a map can be created so that the PGs are intelligently laid out to ensure high-availability in the event of an outage of one or more failure-domains depending on the level of redundancy. This intelligent map of how to mirror the data to ensure optimal performance and availability is called a Ceph CRUSH (Controlled, Scalable, Decentralized Placement of Replicated Data) map. QuantaStor creates and sets up CRUSH maps automatically so that administrators do not need to manually set the up, a process that can be quite complex. To facilitate automatic CRUSH map management one must input some information which tells where the QuantaStor appliances are deployed. This is done by creating a tree of Resource Domains via the Web UI (or via CLI/REST APIs) to organize the appliances in a given QuantaStor grid into sites, buildings and racks. Using this information QuantaStor automatically generates the best CRUSH map automatically when pools are provisioned to ensure optimal performance and high-availability. Custom CRUSH map changes can still be made to adjust the map after the pool(s) are created and OSNEXUS provides consulting services to meet special requirements. Resource Domains are a QuantaStor construct so you will not find mention of them in general Ceph documentation but they map closely to the CRUSH bucket hierarchy.