VMware Configuration

Revision as of 18:21, 13 May 2023

Contents

- 1 Storage Volume Block Size Selection

- 2 Creating VMware Datastore

- 3 Performance Tuning

- 3.1 Network Tuning - LACP vs Round-Robin Bonding

- 3.2 Network Tuning - Multiple Subnets

- 3.3 Network Tuning - Jumbo Frames / MTU 9000

- 3.4 Hardware Tuning - Firmware Updates

- 3.5 Hardware Tuning - BIOS Power Management Mode

- 3.6 VMware Tuning - RX/TX Frame Size

- 3.7 VMware Tuning - iSCSI MaxIoSizeKB

- 3.8 Credits

Storage Volume Block Size Selection

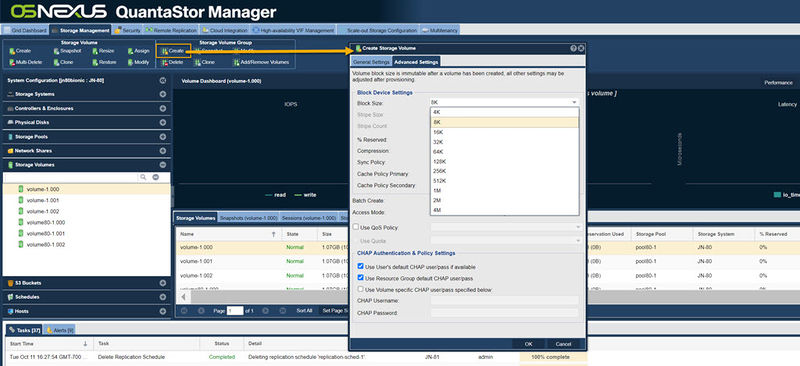

VMware VMFS v6 introduced changes which limit the valid block sizes for VMware Datastores on QuantaStor Storage Volumes to a range between 8K and 64K. As such, all Storage Volumes allocated for use as VMware Datastores should be allocated with an 8K, 16K, 32K, or 64K block size. This range of block sizes is also compatible with both newer and older versions of VMware, including VMFS 5.

Maximizing IOPS

Our general recommendation for VMware Datastores has traditionally been 8K so as to maximize IOPS for databases, but this has been shown to have a higher level of overhead/padding which can reduce overall usable capacity. For this reason, and due to solid performance in the field with the larger 64K block size, we recommend 64K for general server and desktop virtualization workloads. That said, databases may be better served by sticking with an 8K block size or by having the iSCSI/FC storage accessed directly from the database server guest VMs rather than virtualized at the Datastore layer.

Maximizing Throughput

For maximum throughput we recommend using the largest supported block size which is 64K. This will also give solid IOPS performance for most VM workloads and aligns well with VMware's SFB layout.

Selecting Storage Volume Block Size

As noted above, we recommend selecting the 64K block size for your VMware Datastores, though 8K, 16K and 32K are also viable options which could yield some improvement in IOPS for your workload at the cost of throughput and additional space overhead. NOTE: The block size cannot be changed after the Storage Volume has been created. Be sure to select the correct block size up-front; otherwise you will need to allocate a new Storage Volume with the correct block size and may then need to VMware Live Migrate the VMs from the old Storage Volume to the new one to switch over.
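The selection rules above can be summarized as a small shell sketch. The function names and the workload labels ('database', 'general', 'throughput') are hypothetical and only illustrate the guidance in this section; they are not part of QuantaStor or VMware tooling.

```shell
# Succeed only for the block sizes (in KB) that this article lists
# as valid for VMware Datastores: 8, 16, 32 and 64.
is_valid_block_kb() {
    case "$1" in
        8|16|32|64) return 0 ;;
        *) return 1 ;;
    esac
}

# Print the block size (in KB) suggested above for a workload label.
# The labels are illustrative assumptions, not product terminology.
recommend_block_kb() {
    case "$1" in
        database) echo 8 ;;
        general|throughput) echo 64 ;;
        *) echo "unknown workload: $1" >&2; return 1 ;;
    esac
}
```

For example, `recommend_block_kb general` prints 64, and `is_valid_block_kb 128` fails because 128K is outside the supported range.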

Creating VMware Datastore

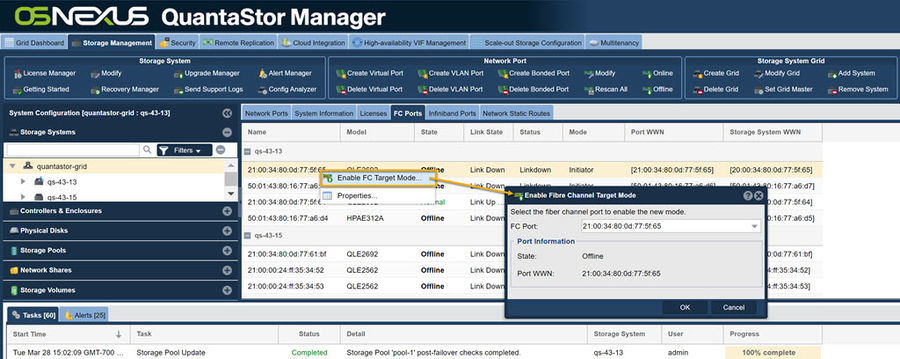

Using Fibre Channel

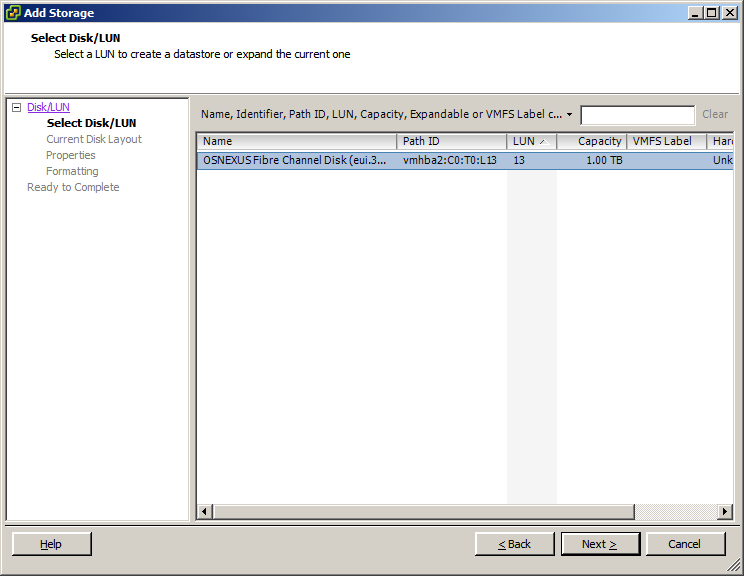

Setting up a datastore in VMware using fibre channel takes just a few simple steps.

- First verify that the fibre channel ports are enabled from within QuantaStor. You can enable them by right clicking on the port and selecting 'Enable FC Target Mode...'.

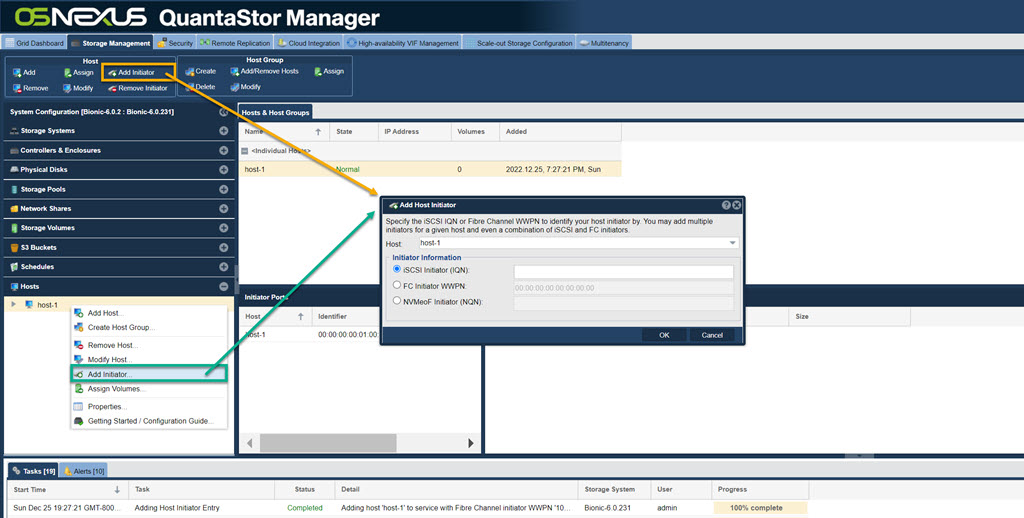

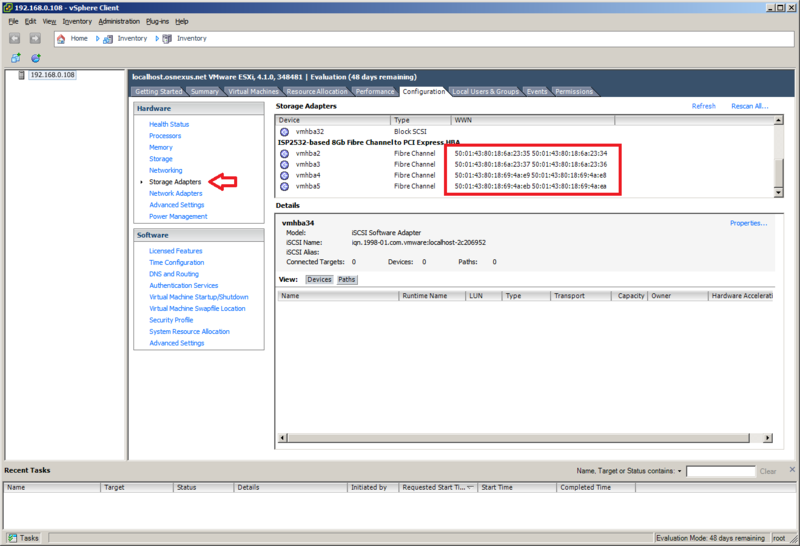

- Create a host entry within QuantaStor, and add all of the WWNs for the fibre channel adapters. To add more than one initiator to the QuantaStor host entry, right click on the host and select 'Add Initiator'. In VMware, the WWNs can be found in the 'Configuration' tab, under the 'Storage Adapters' section.

- Now add the desired storage volume to the host you just created. This storage volume will be the Datastore that is within VMware.

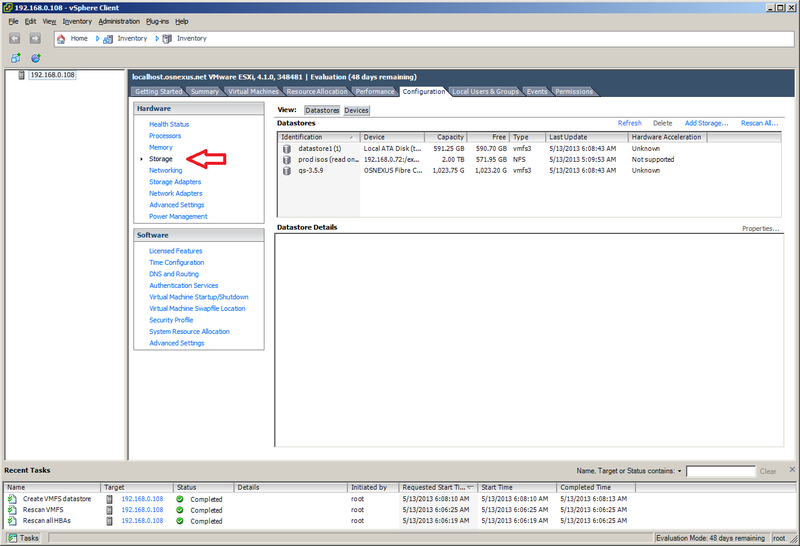

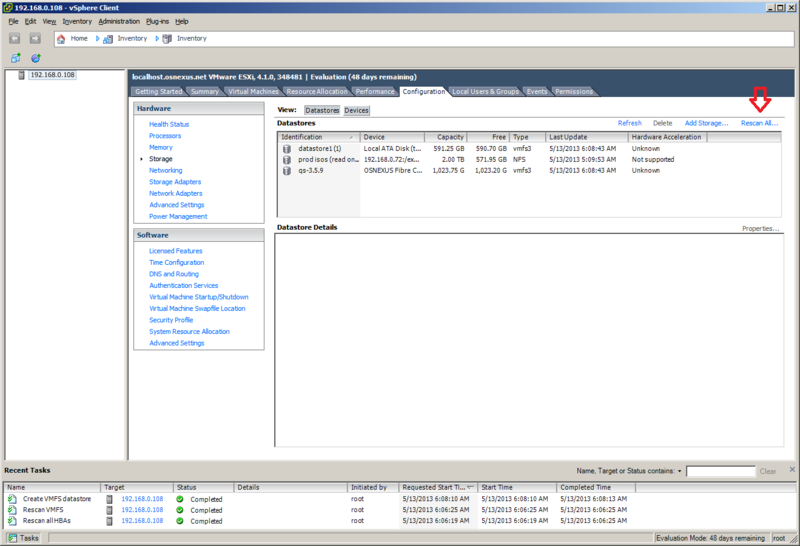

- Next, navigate to the 'Storage' section of the 'Configuration' tab. From within here we will want to select 'Rescan All...'. In the rescan window that pops up, make sure that 'Scan for New Storage Devices' is selected and click 'OK'.

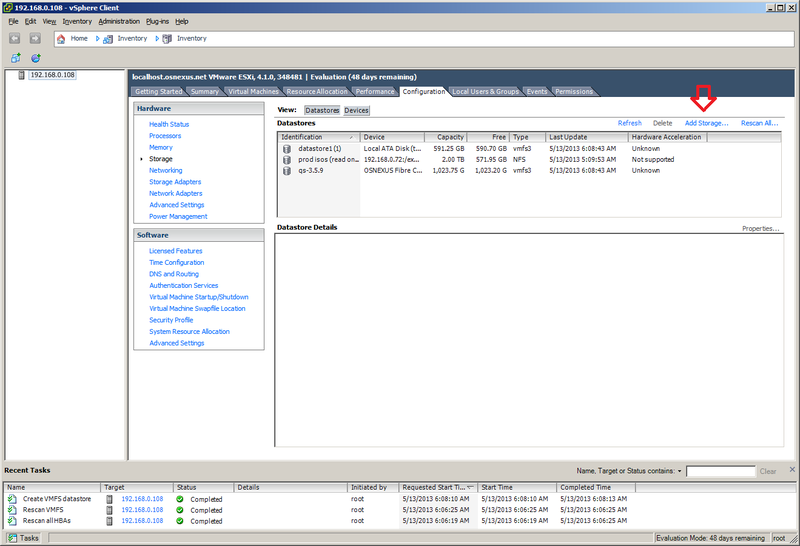

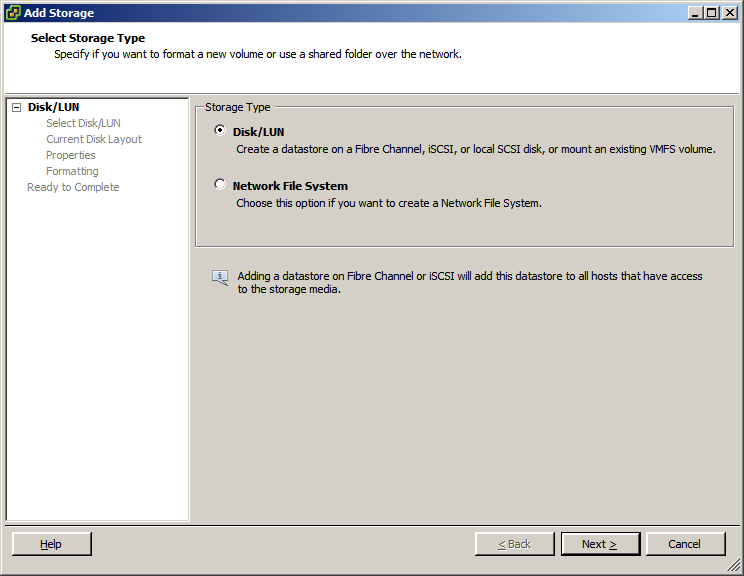

- After the scan is finished (you can see the progress of the task at the bottom of the screen), select the 'Add Storage...' option. Make sure that 'Disk/LUN' is selected and click next. If everything is configured correctly you should now see the storage volume that was assigned before. Select the storage volume and finish the wizard.

You should now be able to use the storage volume that was assigned from within QuantaStor as a datastore on the VMware server.

Using iSCSI

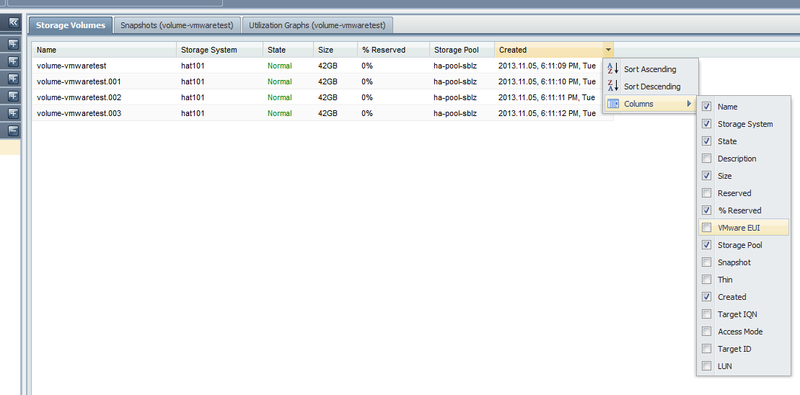

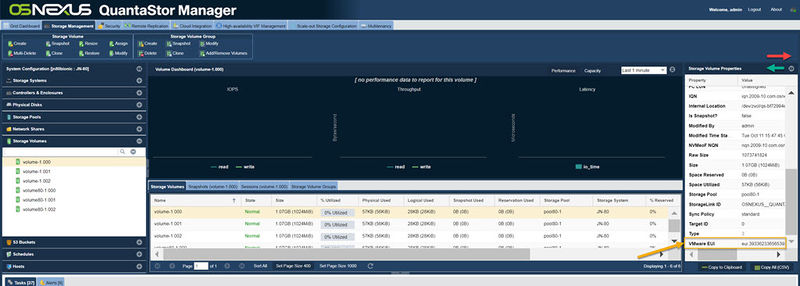

The VMware EUI unique identifier for any given volume can be found in the properties page for the Storage Volume in the QuantaStor web management interface. There is also a column you can activate as shown in this screenshot.

This screen shows a list of Storage Volumes and their associated VMware EUIs which you can use to correlate the Storage Volumes with your iSCSI device list in VMware vSphere.

Here is a video explaining the steps to set up a datastore using iSCSI: Creating a VMware vSphere iSCSI Datastore with QuantaStor Storage

Using NFS

Here is a video explaining the steps to set up a datastore using NFS: Creating a VMware datastore using an NFS share on QuantaStor Storage

Performance Tuning

Performance tuning is important. A number of factors contribute to performance, and collectively they can provide huge improvements vs an untuned system.

Network Tuning - LACP vs Round-Robin Bonding

Network Tuning - Multiple Subnets

Network Tuning - Jumbo Frames / MTU 9000

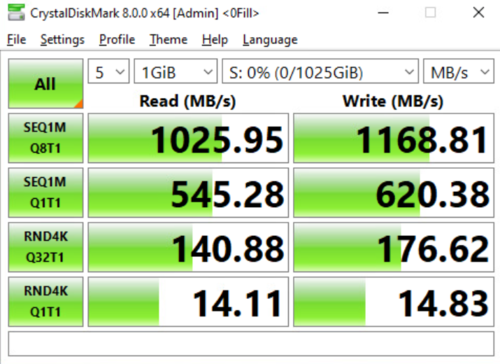

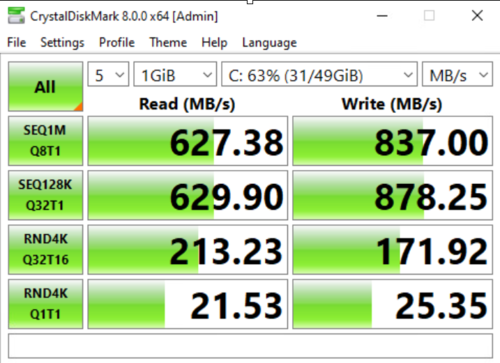

In Ethernet transmissions the default maximum transmission unit (MTU) is 1500 bytes (about 1.5KB), which is not a lot of data. Jumbo Frames implies an MTU of 9000, which means 6x more data is sent per frame (1500 x 6 = 9000). This minimizes the back and forth between the hosts and the storage system, thereby reducing latency and improving throughput. When data is transmitted in small chunks (1500 MTU), more transmit and acknowledgment operations are required to send the same amount of data, even with the benefits of modern TCP Offload Engines (TOE). With this adjustment one can expect anywhere from a 15% to 40% improvement in overall throughput.
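To make the frame-count argument concrete, the following sketch counts how many frames are needed to carry a given amount of data at each MTU. It assumes 40 bytes of per-frame overhead (a 20-byte IPv4 header plus a 20-byte TCP header, no options), and frames_needed is a hypothetical helper name used only for illustration.

```shell
# Print the number of frames needed to carry BYTES of data at a given MTU.
# Assumption: 40 bytes of each frame are IPv4 + TCP headers (no options),
# so the usable payload per frame is MTU - 40.
# Usage: frames_needed BYTES MTU
frames_needed() {
    bytes=$1
    mtu=$2
    payload=$((mtu - 40))
    # Integer ceiling division: round up so a partial frame is counted.
    echo $(( (bytes + payload - 1) / payload ))
}
```

Under these assumptions, `frames_needed 1048576 1500` prints 719 and `frames_needed 1048576 9000` prints 118, so 1 MiB takes roughly one sixth as many frames with Jumbo Frames.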

Before tuning, using 1500 MTU:

In the above results one can see about a 39% improvement in overall throughput but very little change in terms of IOPS as the IOPS test is inherently using a small 4K block size that is not going to see much improvement from the larger network frame size.

image credit: C. Selfridge

Special Frame Sizes

There are special cases where, with specific models of network cards (NICs), it can be helpful to set the Jumbo Frames MTU to something other than 9000. If you're not seeing any improvement with a Jumbo Frame size of 9000, you might try some alternate sizes (e.g. 9128, 8500, 4800, 4200) that may be a better match for your switches and NICs, but in general one should start with MTU 9000, which is the standard for Jumbo Frames.

Configuring QuantaStor Network Ports for Jumbo Frames

To set Jumbo Frames (MTU 9000) on a network port in QuantaStor, log in to the web user interface, then select the network port to be adjusted in the Storage Systems section under the system to be modified. Once a port is selected, right-click and choose Modify Network Port. Within the Modify Network Port dialog press the "Jumbo Frames" button, then verify the MTU is set to 9000 and press OK.

Verifying Jumbo Frames

In order for Jumbo Frames to take effect, the MTU 9000 setting must be applied on your network switch and on the host-side network ports so that the larger frames are allowed end-to-end. To verify this, ping to/from the QuantaStor system with fragmentation disallowed (-M do). Note that -s sets the ICMP payload size and 28 bytes of IPv4 and ICMP headers are added on top, so a payload of 8972 bytes is the largest that fits within an MTU of 9000. Here's an example of what you'll see if it's not configured correctly and the network is limited to the standard MTU of 1500.

    root@qs-42-23:~# ping -M do -s 9000 10.0.42.24
    PING 10.0.42.24 (10.0.42.24) 9000(9028) bytes of data.
    ping: local error: message too long, mtu=1500
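When testing MTUs other than 9000, such as the special frame sizes mentioned earlier, the largest ping payload that still fits in one unfragmented frame can be computed rather than guessed. This is a minimal sketch assuming IPv4 without options (20-byte IP header plus 8-byte ICMP header); the function names are hypothetical helpers, not part of QuantaStor or ping.

```shell
# Print the largest ICMP payload that fits in one frame of the given MTU.
# Assumes IPv4 without options: 20-byte IP header + 8-byte ICMP header.
icmp_payload_for_mtu() {
    echo $(( $1 - 28 ))
}

# Print (do not run) the ping command that tests a path at a given MTU.
# -M do forbids fragmentation, as in the example above.
# Usage: mtu_ping_cmd HOST MTU
mtu_ping_cmd() {
    host=$1
    mtu=$2
    echo "ping -M do -s $(icmp_payload_for_mtu "$mtu") $host"
}
```

For example, `mtu_ping_cmd 10.0.42.24 9000` prints `ping -M do -s 8972 10.0.42.24`. Replies to that ping indicate that MTU 9000 is working end-to-end, whereas a payload of 9000 produces a 9028-byte packet that exceeds even a correctly configured MTU of 9000.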

Hardware Tuning - Firmware Updates

Sometimes old firmware can cause network issues, so it is almost always a good idea to install the latest firmware for your motherboard BIOS and your network cards. Firmware is available from the hardware manufacturer's website and is generally fairly easy to apply, but it will usually also require a cold boot power cycle. Do not underestimate the importance of having current firmware; this is an important step in tuning any system.

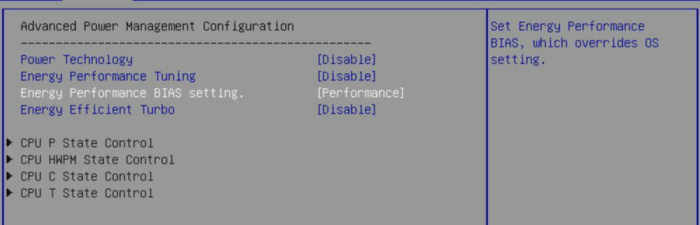

Hardware Tuning - BIOS Power Management Mode

Modern systems are designed with many power management features, which are great for reducing costs and improving the environmental impact of datacenters, but in the case of storage systems it is very important that the system is tuned to use the Performance power management mode. There are no cases where your QuantaStor storage system should be set in any mode other than Performance. Other modes can lead to the system going offline unexpectedly via sleep or hibernate modes, or may significantly impact system performance.

image credit: C. Selfridge

VMware Tuning - RX/TX Frame Size

These configuration options are workload dependent and may not yield improvements.

Here one can see that vmnic2 has a max RX/TX size of 1024:

    esxcli network nic ring preset get -n vmnic2
    RX: 1024
    RX Mini: 0
    RX Jumbo: 0
    TX: 1024

This may be increased to 2048 using the following commands for RX and TX respectively:

    esxcli network nic ring current set -n vmnic2 -r 2048
    esxcli network nic ring current set -n vmnic2 -t 2048

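Then verify the configuration changes after the adjustments (the related 'ring current get' sub-command reports the ring sizes currently in effect, whereas 'ring preset get' reports the maximum sizes the NIC supports):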

    esxcli network nic ring preset get -n vmnic2
    RX: 2048
    RX Mini: 0
    RX Jumbo: 0
    TX: 2048

Repeat these steps for each of the vmnic ports.
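Repeating the commands by hand for every port is error-prone, so one option is to generate them with a small loop. The sketch below only prints the commands so they can be reviewed first; ring_resize_cmds is a hypothetical helper name, and the NIC names passed to it are placeholders for whichever ports carry storage traffic.

```shell
# Print (do not run) the esxcli commands that set the RX and TX ring size
# on each listed NIC, using the same commands shown above.
# Usage: ring_resize_cmds SIZE NIC [NIC ...]
ring_resize_cmds() {
    size=$1
    shift
    for nic in "$@"; do
        echo "esxcli network nic ring current set -n $nic -r $size"
        echo "esxcli network nic ring current set -n $nic -t $size"
    done
}
```

For example, `ring_resize_cmds 2048 vmnic2 vmnic3` prints four commands. After reviewing them, the output can be piped to `sh` on the ESXi host to apply the changes.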

VMware Tuning - iSCSI MaxIoSizeKB

Adjusting MaxIoSizeKB from its default of 128 to 512 may or may not be helpful; this adjustment is also workload dependent. As the setting's description notes, the change requires a reboot to take effect.

    esxcli system settings advanced list -o /ISCSI/MaxIoSizeKB
    Path: /ISCSI/MaxIoSizeKB
    Type: integer
    Int Value: 128
    Default Int Value: 128
    Min Value: 128
    Max Value: 512
    String Value:
    Default String Value:
    Valid Characters:
    Description: Maximum Software iSCSI I/O size (in KB) (REQUIRES REBOOT!)

    esxcli system settings advanced set -o /ISCSI/MaxIoSizeKB -i 512
    Path: /ISCSI/MaxIoSizeKB
    Type: integer
    Int Value: 512
    Default Int Value: 128
    Min Value: 128
    Max Value: 512
    String Value:
    Default String Value:
    Valid Characters:
    Description: Maximum Software iSCSI I/O size (in KB) (REQUIRES REBOOT!)
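When checking this setting across several hosts, it can help to extract just the current value from the listing. The following is a minimal sketch that assumes the output format shown above; current_int_value and setting_is are hypothetical helper names.

```shell
# Read `esxcli system settings advanced list` output on stdin and print
# the current "Int Value". The "Default Int Value" line is skipped because
# the pattern only matches lines that begin (after optional whitespace)
# with "Int Value:".
current_int_value() {
    sed -n 's/^[[:space:]]*Int Value:[[:space:]]*\([0-9][0-9]*\).*$/\1/p'
}

# Succeed if the current value read from stdin equals the wanted value.
# Usage: ... | setting_is 512
setting_is() {
    [ "$(current_int_value)" = "$1" ]
}
```

For example, `esxcli system settings advanced list -o /ISCSI/MaxIoSizeKB | current_int_value` would print 128 on a host that still has the default shown above.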

Credits

Special thank you to C. Selfridge for expert input and his shared expertise on tuning VMware that directly helped produce this article.