Getting Started Overview

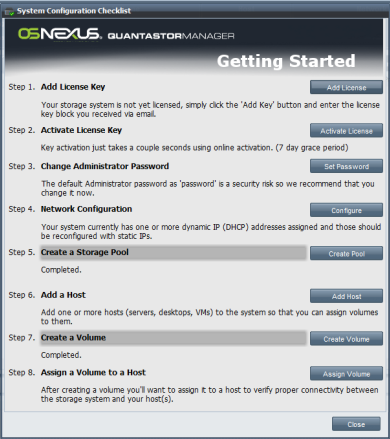

This guide assumes that you have already installed QuantaStor and have successfully logged in to QuantaStor Manager. If you have not yet installed the QuantaStor SSP software on your server, please see the Installation Guide for more details. It is best to follow along with this Getting Started Guide as you configure the system using the Getting Started checklist, which appears when you first log in to the QuantaStor Manager web interface. For a full guide to the web interface, see the QuantaStor Administrators Guide.

The checklist can be accessed again at any time by pressing the 'System Checklist' button in the toolbar.

The default administrator user name for your storage system is 'admin', and this user name automatically appears in the username field of the login screen. The password for the 'admin' account is initially 'password' (without the quotes). You will want to change this after you first log in; doing so is one of the steps in the checklist.

License Key Management

Add License Key

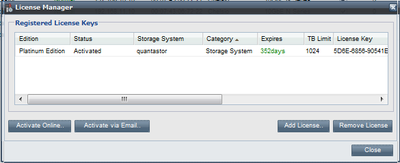

Once QuantaStor has been installed, the first step is to enter your license key block. Your license key block can be added using the License Manager dialog which is accessed by pressing the License Manager button in the toolbar.

It's also presented as the first step in the 'Getting Started' checklist.

The key block you received via email is contained within markers like so:

--------START KEY BLOCK-------- ---------END KEY BLOCK---------

Note that when you add the key using the 'Add License' dialog you can include or omit the START/END KEY BLOCK markers; it makes no difference.
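If you keep license keys in a script or inventory file, it can help to normalize them the same way before pasting. The following is a minimal sketch of that idea, not QuantaStor's own parsing logic: the function name `strip_key_block_markers` and the placeholder key lines are hypothetical, and the marker pattern is inferred only from the example markers shown above.

```python
import re

# Matches marker lines like the ones shown in this guide. The number of
# dashes is not relied on; this pattern is an assumption, not a spec.
_MARKER = re.compile(r"^-+\s*(START|END) KEY BLOCK\s*-+$")


def strip_key_block_markers(text: str) -> str:
    """Return the key block body with START/END marker lines removed."""
    lines = [line.strip() for line in text.splitlines()]
    body = [line for line in lines if line and not _MARKER.match(line)]
    return "\n".join(body)


# Placeholder content only; a real key block looks different.
with_markers = """--------START KEY BLOCK--------
EXAMPLE-KEY-LINE-1
EXAMPLE-KEY-LINE-2
---------END KEY BLOCK---------"""
without_markers = "EXAMPLE-KEY-LINE-1\nEXAMPLE-KEY-LINE-2"

# Both forms reduce to the same body.
print(strip_key_block_markers(with_markers) == strip_key_block_markers(without_markers))
```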

Activate License Key

Once your key block has been entered you'll want to activate your key, which takes just a few seconds using the online Activation dialog. If your storage system is not connected to the internet, select the 'Activate via Email' dialog and send the information it contains to support@osnexus.com. You have a 7-day grace period for license activation, so you can begin configuring and using the system before it is activated. That said, if you do not activate within the 7 days, the storage system will no longer allow any additional configuration changes until an activation key is supplied.

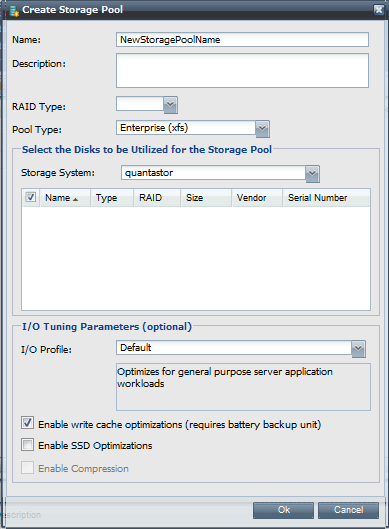

Creating Storage Pools

To create your first storage pool, select the disks that you want to use in the pool, choose the RAID layout for the pool, give the pool a name and press OK. That's all there is to it. You may have to wait a couple of minutes until the pool is 1% complete with its synchronization before you can start to create storage volumes in the pool. If you choose RAID0 then the pool will be ready immediately, but it won't be fault tolerant, so you'll likely want to delete it and replace it with a RAID6 or RAID10 storage pool before you start writing important data to the storage system.

For more information on storage pools, RAID levels and storage pool options please see the Administrators Guide.

Storage pools combine or aggregate one or more physical disks (SATA, SAS, or SSD) into a single pool of storage from which storage volumes (iSCSI targets) can be created. Storage pools can be created using any of the following RAID types: RAID0, RAID1, RAID5, RAID6, or RAID10. Choosing the optimal RAID type depends on the I/O access patterns of your target application, the number of disks you have, and the amount of fault tolerance you require. (Note: Fault tolerance is just a way of saying how many disks can fail within a storage pool, also known as a RAID group, before you lose data.) RAID1 and RAID5 allow one disk to fail without interrupting disk I/O. When a disk fails you can remove it, and you should add a spare disk to the 'degraded' storage pool as soon as possible in order to restore it to a fault-tolerant status. RAID6 keeps running through up to two disk failures, while RAID10 can tolerate one disk failure per mirror pair. Finally, RAID0 is not fault tolerant at all, but it is your only choice if you have only one disk, and it can be useful in some scenarios where fault tolerance is not required. Here's a breakdown of the various RAID types and their pros and cons.

- RAID0 layout is also called 'striping' and it writes data across all the disk drives in the storage pool in a round robin fashion. This has the effect of greatly boosting performance. The drawback of RAID0 is that it is not fault tolerant, meaning that if a single disk in the storage pool fails then all of your data in the storage pool is lost. As such, RAID0 is not recommended except in special cases where the potential for data loss is a non-issue.

- RAID1 is also called 'mirroring' because it achieves fault tolerance by writing the same data to two disk drives so that you always have two copies of the data. If one drive fails, the other has a complete copy and the storage pool continues to run. RAID1 and its variant RAID10 are ideal for databases and other applications which do a lot of small write I/O operations.

- RAID5 achieves fault tolerance via what's called a parity calculation, where parity blocks contain an XOR calculation of the bits in the corresponding blocks on the other drives. For example, if you have 4 disk drives and you create a RAID5 storage pool, the capacity of 3 of the disks will store data and the capacity of 1 disk will contain parity information. (RAID5 generally rotates the parity blocks across all of the drives rather than dedicating one drive to parity, but the capacity cost is the same.) The parity information can be used to recover from the failure of any single disk: once the failed disk is replaced, its contents are reconstructed from the remaining disks. RAID5 (and RAID6) are especially well suited for audio/video streaming, archival, and other applications which do heavy sequential I/O (such as reading and writing large files). They are not as well suited for database applications which do heavy amounts of small random write I/O, or for large file systems containing lots of small files with a heavy write load.

- RAID6 improves upon RAID5 in that it can handle two drive failures but it requires that you have two disk drives dedicated to parity information. For example, if you have a RAID6 storage pool comprised of 5 disks then 3 disks will contain data, and 2 disks will contain parity information. In this example, if the disks are all 1TB disks then you will have 3TB of usable disk space for the creation of volumes. So there's some sacrifice of usable storage space to gain the additional fault tolerance. If you have the disks, we always recommend using RAID6 over RAID5. This is because all hard drives eventually fail and when one fails in a RAID5 storage pool your data is left vulnerable until a spare disk is utilized to recover your storage pool back to a fault tolerant status. With RAID6 your storage pool is still fault tolerant after the first drive failure. (Note: Fault-tolerant storage pools (RAID1,5,6,10) that have suffered a single disk drive failure are called degraded because they're still operational but they require a spare disk to recover back to a fully fault-tolerant status.)

- RAID10 is similar to RAID1 in that it utilizes mirroring, but RAID10 also does striping over the mirrors. This gives you the fault tolerance of RAID1 combined with the performance of RAID0. The drawback is that half the disks are used for fault tolerance, so if you have 8 1TB disks utilized to make a RAID10 storage pool, you will have 4TB of usable space for creation of volumes. RAID10 performs very well with both small random I/O operations and sequential operations, and it is highly fault tolerant because multiple disks can fail as long as they're not from the same mirror pair. If you have the disks and you have a mission critical application, we highly recommend that you choose the RAID10 layout for your storage pool.
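To make the RAID5 parity idea concrete, here is a minimal sketch of XOR parity on tiny in-memory blocks. It illustrates the principle only and is not how QuantaStor or any RAID driver is implemented; the helper name `xor_blocks` and the sample data are made up for the example.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Three data blocks plus one parity block, mirroring the 4-disk example.
data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data)

# Simulate losing the second data block and rebuilding it from the
# surviving data blocks plus the parity block.
survivors = [data[0], data[2], parity]
rebuilt = xor_blocks(survivors)
print(rebuilt == data[1])
```

The rebuild works because XOR-ing a value with itself cancels out, so combining the survivors with the parity leaves only the missing block.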

In many cases it is useful to create more than one storage pool so that you have both basic low cost fault-tolerant storage available from perhaps a RAID5 storage pool, as well as a highly fault-tolerant RAID10 or RAID6 storage pool available for mission critical applications.
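The fault-tolerance rules described above can be summarized in a short sketch. The function `pool_survives` is hypothetical, and the RAID10 pairing of disks as (0, 1), (2, 3), and so on is an illustrative assumption rather than a statement about how QuantaStor pairs mirrors.

```python
def pool_survives(raid_level, disk_count, failed):
    """Return True if the pool still holds all data after `failed` disks fail.

    `failed` is a collection of zero-based disk indexes.
    """
    failed = set(failed)
    if raid_level == "RAID0":
        return len(failed) == 0          # no fault tolerance at all
    if raid_level in ("RAID1", "RAID5"):
        return len(failed) <= 1          # one disk failure is tolerated
    if raid_level == "RAID6":
        return len(failed) <= 2          # two disk failures are tolerated
    if raid_level == "RAID10":
        # Assumed pairing: disks (0, 1), (2, 3), ... form the mirror pairs.
        pairs = [(i, i + 1) for i in range(0, disk_count, 2)]
        return all(not (a in failed and b in failed) for a, b in pairs)
    raise ValueError(f"unsupported RAID level: {raid_level}")


print(pool_survives("RAID10", 8, {0, 2, 5}))  # failures in different pairs
print(pool_survives("RAID10", 8, {0, 1}))     # both disks of one pair
print(pool_survives("RAID6", 5, {1, 3}))
```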

Once you have created a storage pool it will take some time to 'rebuild'. Once the 'rebuild' process has reached 1% you will see the storage pool appear in QuantaStor Manager and you can begin to create new storage volumes.

WARNING: Although you can begin using the pool at 1% rebuild completion, your storage pool is not fault-tolerant until the rebuild process has completed.

Disk Drive Selection

Storage pools are created using entire disk drives. That is, no two storage pools can share a physical disk between them. Also, storage pools that use striping, such as RAID5, RAID6, and RAID0, must use disks of equal size; otherwise every disk is treated as if it were the size of the smallest disk in the pool. For example, if you have 4 disks of different sizes (1 x 500GB, 2 x 640GB, and 1 x 1TB) and you create a RAID5 storage pool out of them, then the storage pool will effectively be comprised of 4 x 500GB disks because the extra space on the 640GB and 1TB drives will not be utilized. With RAID5, the capacity of one of those disks holds the parity data, leaving you with 1.5TB of usable space in the pool.
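The capacity arithmetic from this guide can be sketched as follows. The function `usable_capacity_gb` is a hypothetical helper; it assumes every RAID level treats each disk as the size of the smallest disk, which the guide states only for the striped layouts, and it ignores file-system and metadata overhead.

```python
def usable_capacity_gb(raid_level, disk_sizes_gb):
    """Estimate usable pool capacity in GB for a list of disk sizes."""
    count = len(disk_sizes_gb)
    smallest = min(disk_sizes_gb)  # larger disks are truncated to this size
    if raid_level == "RAID0":
        return count * smallest
    if raid_level == "RAID1":
        return smallest                   # every disk holds the same copy
    if raid_level == "RAID5":
        return (count - 1) * smallest     # one disk's worth of parity
    if raid_level == "RAID6":
        return (count - 2) * smallest     # two disks' worth of parity
    if raid_level == "RAID10":
        return (count // 2) * smallest    # half the disks hold mirror copies
    raise ValueError(f"unsupported RAID level: {raid_level}")


print(usable_capacity_gb("RAID5", [500, 640, 640, 1000]))  # 1500
print(usable_capacity_gb("RAID6", [1000] * 5))             # 3000
print(usable_capacity_gb("RAID10", [1000] * 8))            # 4000
```

The three results match the worked examples in the text: 1.5TB for the mixed-size RAID5 pool, 3TB for the 5-disk RAID6 pool, and 4TB for the 8-disk RAID10 pool.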

In short, plan ahead and purchase hard drives of the same size from the same manufacturer. You can use SAS, SATA and/or SSD drives in your QuantaStor storage system, but it is not a good idea to mix them within a single storage pool as they all have different I/O characteristics.

Data Compression

Storage pools support data compression, which not only saves disk space but also improves performance. It may seem surprising that read and write performance would improve with compression, but today's processors can compress and decompress data in much less time than it takes for a hard disk head to seek to the correct location on the disk. This means that once the head reaches the right location, the drive needs to read fewer blocks to return a large amount of data, which makes much less work for the hard drive on a given request. This is especially effective with read operations but also improves write performance. Data compression is enabled by default, so to use it with your storage pool just leave the box checked in the 'Create Storage Pool' dialog.
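The "fewer blocks to read" effect can be illustrated with a short sketch. It uses Python's standard `zlib` module purely as a stand-in, since this guide does not say which compression algorithm QuantaStor uses, and the sample data is deliberately repetitive, so real-world ratios will differ.

```python
import zlib


def compression_ratio(data: bytes) -> float:
    """Return original size divided by compressed size for `data`."""
    return len(data) / len(zlib.compress(data))


# Highly repetitive sample data; an assumption chosen to compress well.
block = b"customer_id,order_id,status\n" * 1000
compressed = zlib.compress(block)

# Fewer bytes on disk means fewer blocks to read for the same content.
print(len(block), len(compressed), round(compression_ratio(block), 1))

# Decompression restores the data exactly.
print(zlib.decompress(compressed) == block)
```

Data that is already compressed or encrypted will show a ratio close to 1, so the benefit depends heavily on what you store.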

SSD Optimizations

SSD drives do not have the rotational latency issues that you find with traditional hard disk drives. As such, the intelligent queuing operations that happen at various I/O layers to improve read/write performance based on the location of the head and the proximity of data blocks do no good. In fact they hurt performance, as in many cases the disk drivers will wait for additional requests or blocks to arrive in an attempt to queue and optimize the I/O operations. SSD drives also use a technology called TRIM to free up blocks that are no longer used. Without TRIM, the write performance of SSD drives progressively degrades as the layout of the blocks becomes more and more fragmented. Because SSD drives, and hard drives in general, are oblivious to the file-system type and layout of the data stored within them, they do not know when a given block has been deleted and can be freed up for reuse; hence the need for something at the operating system level to communicate this information. This is called "garbage collection", and modern operating systems and file systems like the one used with QuantaStor use TRIM to tell SSD devices which blocks can be garbage collected, thereby improving the performance of the device and of the iSCSI storage volumes residing on it.

Creating Storage Volumes

After you have created one or more storage pools you can begin creating storage volumes in them. Storage volumes are the iSCSI disks that you present to your hosts. Sometimes they're called LUNs or virtual disks, but storage volume is the generally accepted term used by the broader storage industry. Storage volume creation is very straightforward: click the 'Create Storage Volume' button in the toolbar and, when the dialog appears, give your new volume a name, select the size of the volume you want to create using the slider bar, and press OK. There are some custom options, such as thin provisioning and CHAP authentication, that you can configure when creating a volume. For now just use the defaults, but we do recommend reading more about these features in the Administrators Guide.

Adding Hosts

The host object represents a server, desktop, virtual machine or any other entity that you want to present storage to. QuantaStor makes sure that each host is only allowed to see the storage volumes that you have assigned to it by filtering what the host sees when it does an iSCSI login to the storage system. The industry term for this is "LUN Masking", but in QuantaStor we refer to it more simply as storage assignment. To add a host to the system, click the 'Add Host' button and you will be presented with a dialog where you can enter a name for your host, and an IP address and/or IQN. We highly recommend that you always identify your hosts by IQN as it is easier to manage your hosts that way. Once you've entered a host name and its IQN, press OK to have the host created. Now you're ready to assign the volume you created in the previous step to your host.
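Before typing an IQN into the 'Add Host' dialog, a quick sanity check can catch copy-and-paste mistakes. The sketch below is a simplified check based on the common `iqn.yyyy-mm.reversed-domain[:identifier]` form from the iSCSI naming convention; it is not full validation and is not what QuantaStor itself does. The function name `looks_like_iqn` and the sample names are hypothetical.

```python
import re

# Simplified pattern: "iqn.", a year-month date, a reversed domain name,
# and an optional ":identifier" suffix. This is an approximation only.
_IQN = re.compile(r"iqn\.\d{4}-(0[1-9]|1[0-2])\.[a-z0-9][a-z0-9.-]*(:.+)?")


def looks_like_iqn(name: str) -> bool:
    """Return True if `name` has the general shape of an iSCSI IQN."""
    return _IQN.fullmatch(name) is not None


print(looks_like_iqn("iqn.2009-10.com.example:db01"))  # well-formed shape
print(looks_like_iqn("192.168.0.10"))                  # an IP address, not an IQN
```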

Assigning Storage Volumes to Hosts

There are two ways you can approach assigning storage to a host. The first is to select the host in the tree and choose 'Assign Storage' from the pop-up menu; you'll be presented with a list of all the volumes, from which you simply select those that you want assigned to the selected host. The second is to select the volume in the Storage Volume tree on the left and then choose 'Assign Storage' from the pop-up menu. This allows you to assign storage from the volume perspective, and here you can select multiple hosts that should all have access to the selected storage volume.

Note: A given volume can be assigned to more than one host at the same time, but keep in mind that you need software or a file system that can handle the concurrent access, such as a cluster file system or similar cluster-aware application, if you're going to use the device from two or more hosts simultaneously.

Once you've made your host and volume selections, press OK and you will see the volume appear in the Host tree on the left-hand side of the screen under the host(s) which now have access to the volume.
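Conceptually, storage assignment is a mapping between hosts and volumes that is consulted when a host logs in. The following toy model sketches that idea only; the class `StorageAssignments`, its methods, and the volume and host names are all hypothetical and do not correspond to any QuantaStor API.

```python
from collections import defaultdict


class StorageAssignments:
    """Toy model of storage assignment (LUN masking) keyed by host IQN."""

    def __init__(self):
        self._volumes_by_host = defaultdict(set)

    def assign(self, volume, host_iqns):
        """Assign one volume to one or more hosts."""
        for iqn in host_iqns:
            self._volumes_by_host[iqn].add(volume)

    def visible_volumes(self, host_iqn):
        """Return the volumes a host would see at iSCSI login."""
        return sorted(self._volumes_by_host.get(host_iqn, set()))

    def hosts_for(self, volume):
        """Return the hosts that have access to a volume."""
        return sorted(
            iqn for iqn, volumes in self._volumes_by_host.items() if volume in volumes
        )


assignments = StorageAssignments()
assignments.assign("vol-db", ["iqn.2009-10.com.example:db01"])
assignments.assign(
    "vol-shared",
    ["iqn.2009-10.com.example:db01", "iqn.2009-10.com.example:db02"],
)

print(assignments.visible_volumes("iqn.2009-10.com.example:db02"))   # ['vol-shared']
print(assignments.visible_volumes("iqn.2009-10.com.example:other"))  # []
print(assignments.hosts_for("vol-shared"))
```

A host with no assignments sees nothing, and a volume assigned to two hosts, like `vol-shared` here, is the case where a cluster-aware file system or application is needed.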