Scale-out Block Setup (ceph)

Revision as of 17:17, 23 March 2016

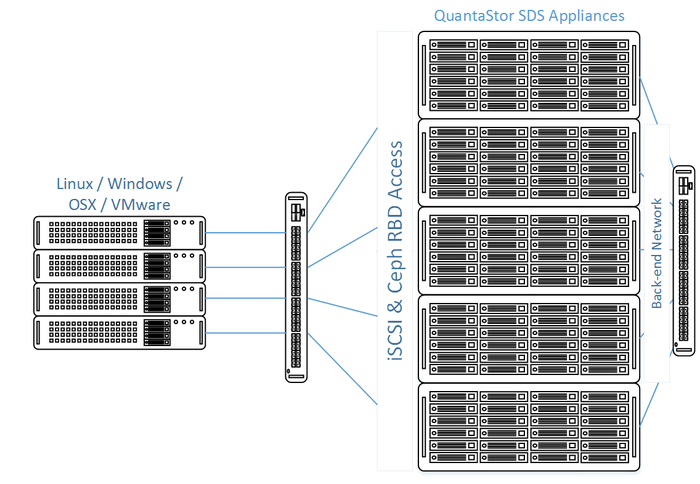

Welcome to the Administrator's Guide for QuantaStor's Scale-out SAN solution using Ceph. QuantaStor Scale-out SAN provides a highly scalable Storage Area Network solution in which QuantaStor appliances provision SAN block storage to iSCSI or Fibre Channel clients from a Ceph storage backend.

This guide provides details regarding Ceph technology as well as instructions on how to set up and configure a Scale-out SAN installation using QuantaStor.

Introduction to Scale-out SAN (iSCSI/FC) Block Storage using Ceph

QuantaStor with Ceph is a highly available and elastic SDS platform that enables scaling storage environments from a small 3x appliance configuration to hyper-scale. Within a QuantaStor grid, up to 20x individual Ceph clusters can be managed through a single pane of glass by logging into any appliance in the grid with any major web browser. The Web UI's configuration, monitoring, and management features make it easy to set up large, complex configurations without ever using a console or command-line tools. The following guide covers how to set up Scale-out SAN block storage, monitor it, and maintain it.

This section will introduce the various component terminology and concepts regarding Ceph to enable confident creation and administration of a Scale-out SAN solution using QuantaStor and Ceph.

Ceph Terminology & Concepts

This section introduces Ceph terms and concepts that will help you become more proficient with Ceph Cluster administration in QuantaStor. The discussion addresses general Ceph concepts; to implement Ceph in QuantaStor quickly, it is recommended to use Getting Started, which can be found at Storage Management --> Storage System --> Storage System --> Getting Started (toolbar).

See also: Getting Started in the Administrator's Guide.

Further Information: Introduction to Ceph.

Ceph Cluster

A Ceph Cluster is a group of three or more systems that have been clustered together using the Ceph storage technology. Ceph requires a minimum of three nodes to create a cluster so that quorum can be established across the Ceph Monitors (see Wikipedia: Quorum (distributed computing)).

In QuantaStor based Ceph configurations, QuantaStor systems must first be combined into a Storage Grid. After the Storage Grid is formed, one or more Ceph Clusters may be created within it. In the example above, the Storage Grid consists of a single Ceph Cluster. When the Ceph Cluster is initially created, QuantaStor automatically deploys 3x Ceph Monitors within it.

Ceph Monitor

The Ceph Monitors form a Paxos cluster (see Lamport's paper "The Part-Time Parliament") for the management of cluster membership, configuration information, and state. Paxos is a consensus algorithm, developed by Leslie Lamport in the late 1980s, that ensures cluster updates are made in a fault-tolerant and timely fashion even in the event of a node outage. Ceph uses the algorithm so that membership, configuration, and state information is updated safely and efficiently across the cluster. Because the algorithm requires a quorum (majority) of monitors to agree on any given change, an odd number of monitors (three or more) is recommended for any given Ceph cluster deployment.

During initial Ceph cluster creation, QuantaStor will configure the first three systems to have active Ceph Monitor services. Configurations with more than 16 nodes should add two additional monitors. This can be done through the QuantaStor web user interface in the Scale-out Storage Configuration section.

In a Ceph cluster with 3x monitors, a minimum of 2x monitors must be online at all times. If only one monitor (or none) is running, storage access is automatically disabled until quorum among the monitors can be re-established. In larger clusters with 5x monitors, 3x monitors must be online at all times to maintain quorum and storage accessibility.
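The majority rule described above can be sketched in a few lines of Python. This is a minimal illustration of the arithmetic only, assuming a strict-majority quorum; the function names are hypothetical and are not part of QuantaStor or Ceph.

```python
def quorum_size(monitors: int) -> int:
    """Smallest number of monitors that forms a strict majority."""
    return monitors // 2 + 1


def tolerated_failures(monitors: int) -> int:
    """How many monitors can be offline while quorum is still possible."""
    return monitors - quorum_size(monitors)


for n in (3, 4, 5):
    print(f"{n} monitors: quorum={quorum_size(n)}, tolerates {tolerated_failures(n)} down")
```

Running it shows that a fourth monitor raises the quorum size to 3 without increasing the number of monitors that may fail, which is why odd monitor counts are used.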

Ceph Object Storage Daemon / OSD

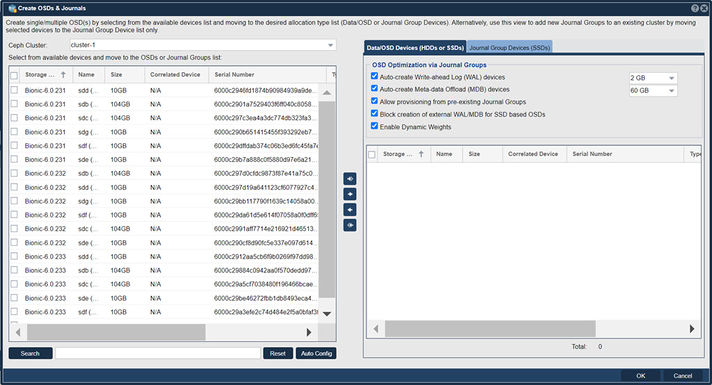

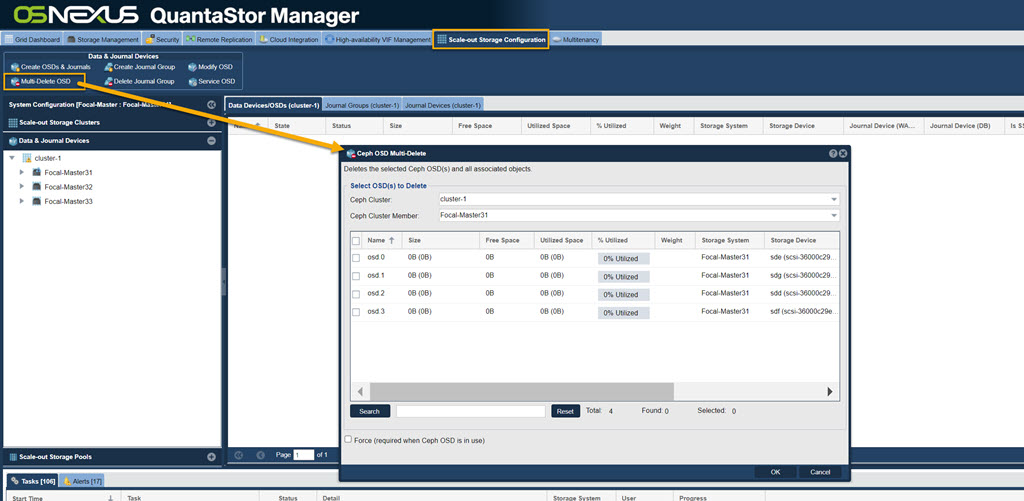

Navigation: Scale-out Storage Configuration --> Data & Journal Devices --> Data & Journal Devices --> Create OSDs & Journals (toolbar)

The Ceph Object Storage Daemon, known as the OSD, is a daemon process that reads and writes data and generally maps 1-to-1 to an HDD or an SSD device. OSD devices can be shared by multiple Storage Pools, so after the OSDs are added you can allocate pools for file, block, and object storage that all use the available OSDs in the cluster to store their data.

QuantaStor Scale-out SAN with Ceph deployments must have at least 3x OSDs per system, making 9x OSDs the minimum total for a 3-system cluster. QuantaStor 5 and newer versions use the BlueStore OSD storage back-end. For additional BlueStore information, see New in Luminous: BlueStore.

N.B.: For ease of use, an Auto Config button is available that optimizes the selection of available devices.

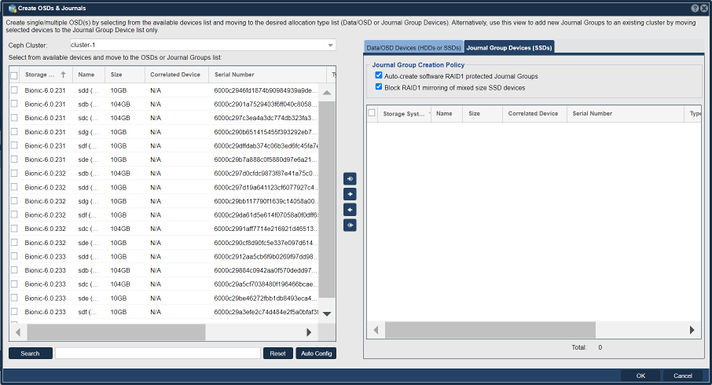

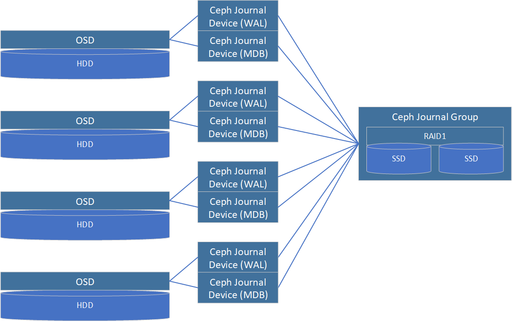

Journal Groups

Journal Groups are used to boost the performance of OSDs. Each Journal Group can provide a performance boost for 5x to 30x OSD devices, depending on the speed of the storage media used to create it. Journal Groups are typically created using a pair of SSDs, which QuantaStor combines into a software RAID1 mirror. Once created, Journal Groups provide high-performance, low-latency storage from which Ceph Journal Devices may be provisioned and attached to new OSDs to boost performance. Because Journal Groups must sustain high write loads over a period of years, only datacenter (DC) grade / enterprise grade flash media should be used to create them. Journal Groups can be created using all types of flash storage media, including NVMe, PMEM, SATA SSD, or SAS SSD.

Journal Devices are provisioned from Journal Groups. Journal Devices come in two types, Write-Ahead-Log (WAL) devices and Meta-data DB (MDB) devices. QuantaStor automatically provisions WAL devices to be 2GB in size and MDB devices can be 3GB, 30GB (default), or 300GB in size.

Journal Groups are not required but are highly recommended when creating HDD based OSDs. SSD based OSDs should not be assigned external WAL and MDB devices from Journal Groups; instead, the MDB and WAL storage for SSDs is allocated out of a small portion of the underlying OSD data device. For platter/HDD based OSDs, we highly recommend creating Journal Groups so that each OSD can have both an external WAL device and an external MDB device.

NVMe and 3D XPoint flash storage media are the best storage types for creating Journal Groups due to their high throughput and IOPS performance. We recommend allocating 100MB/sec of throughput and 32GB of capacity for each HDD based OSD. For example, a system with 60x HDD based OSDs would require 60x100MB/sec, or 6000MB/sec, of Journal Group throughput. If the selected NVMe devices can each sustain 2000MB/sec, three Journal Groups are required, for a total of 6x NVMe SSDs (3x RAID1 Journal Groups), since each RAID1 mirror writes every block to both of its devices and so delivers roughly the throughput of one device. Capacity-wise, 60x HDDs require 60x32GB, or 1.92TB, of provisionable Journal Group capacity for all the WAL and MDB devices. One possible design that meets both the performance and the capacity requirements is 3x Journal Groups built from a total of 6x 800GB NVMe devices with 3x DWPD endurance, which provides 2.4TB of mirrored capacity.
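The sizing arithmetic above can be expressed as a small Python helper. It is a sketch that assumes, as the example does, 100MB/sec and 32GB per HDD based OSD, and that a RAID1 Journal Group delivers roughly the write throughput and usable capacity of a single device; it does not model endurance (DWPD). The function name is hypothetical.

```python
import math


def size_journal_groups(hdd_osds: int, nvme_mb_per_sec: int, nvme_capacity_gb: int,
                        mb_per_sec_per_osd: int = 100, gb_per_osd: int = 32) -> dict:
    """Estimate how many RAID1 Journal Groups a set of HDD based OSDs needs."""
    need_mb_per_sec = hdd_osds * mb_per_sec_per_osd
    need_gb = hdd_osds * gb_per_osd
    # A RAID1 mirror writes every block to both devices, so each Journal Group
    # is assumed to deliver about one device's throughput and capacity.
    groups_for_throughput = math.ceil(need_mb_per_sec / nvme_mb_per_sec)
    groups_for_capacity = math.ceil(need_gb / nvme_capacity_gb)
    groups = max(groups_for_throughput, groups_for_capacity)
    return {
        "required_mb_per_sec": need_mb_per_sec,
        "required_gb": need_gb,
        "journal_groups": groups,
        "nvme_devices": groups * 2,
        "usable_gb": groups * nvme_capacity_gb,
    }


print(size_journal_groups(hdd_osds=60, nvme_mb_per_sec=2000, nvme_capacity_gb=800))
```

For the 60x HDD example this reports 6000MB/sec and 1920GB required, satisfied by 3 Journal Groups (6 NVMe devices) with 2400GB usable.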

Write-Ahead Log (WAL) Journal Devices

WAL Journal Devices are provisioned from Journal Groups and are then attached to new OSDs when they are created. WAL devices accelerate write performance. When a write request is received by an OSD it is able to write the data to low-latency stable flash media very quickly to complete the write. Data can then be written lazily to the HDD as time allows without risk of losing data due to a sudden system power outage.

Meta-data Database (MDB) Journal Devices

MDB Journal Devices boost both read and write performance because they contain all the BlueStore filesystem metadata. Rather than writing small blocks of metadata to HDDs, which have low IOPS performance, the OSD writes them to an external MDB device on flash media, which can sustain high IOPS loads and in turn greatly boosts performance.

Hardware RAID

Although Hardware RAID may be used in Ceph Clusters as an underlying storage abstraction for OSDs, it is generally not recommended. It does have applications in very large Ceph clusters (i.e., thousands of OSDs) and in clusters comprised of servers with limited RAM and CPU core counts. Roughly speaking, each OSD requires 2GB of RAM and a 1GHz fractional CPU core, so a server with 60x HDD based OSDs requires a large dual-processor configuration and 192GB of RAM. Combining disks using HW RAID (for example, 5 disks per RAID unit, each unit presented as one OSD) reduces these requirements 5:1. QuantaStor does have integrated hardware RAID management and monitoring for configurations that use hardware RAID, but again, we do not recommend the use of HW RAID except in specialized and hyper-scale configurations.
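The per-OSD rule of thumb above translates into a quick budget estimate. This sketch simply multiplies the approximate 2GB of RAM and 1GHz of CPU per OSD given in the text; the helper is hypothetical and leaves out memory and CPU for the operating system and other services.

```python
def osd_resources(disks: int, disks_per_osd: int = 1,
                  ram_gb_per_osd: float = 2.0, ghz_per_osd: float = 1.0):
    """Rough (OSD count, RAM in GB, CPU in GHz) budget for one server's OSDs."""
    osds = disks // disks_per_osd  # leftover disks that don't fill a unit are ignored
    return osds, osds * ram_gb_per_osd, osds * ghz_per_osd


print(osd_resources(60))                   # one OSD per HDD
print(osd_resources(60, disks_per_osd=5))  # one OSD per 5-disk hardware RAID unit
```

For 60 disks this gives 60 OSDs, 120GB of RAM, and 60GHz of CPU, versus 12 OSDs, 24GB, and 12GHz with 5-disk RAID units: the 5:1 reduction described above. The per-OSD figures alone come to less than the 192GB recommended for the 60-OSD server, leaving headroom for the rest of the system.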

Ceph Placement Groups (PGs)

Ceph Pools do not write data directly to OSDs; rather, there is an abstraction layer of Placement Groups (PGs) between each Ceph Pool and the OSDs. Each PG can be thought of as a logical stripe across a group of OSDs. Ceph Pools created with a replica=2 storage layout have PGs that each reference 2x OSDs. Similarly, a Ceph Pool with an erasure-coding layout of K8+M2 (8 data chunks plus 2 coding chunks) has PGs that each span 10x OSDs. When creating new File, Block, or Object Storage Pools with QuantaStor, you control the number of PGs to be created using the Scaling Factor option. If a given Ceph Cluster is to be used for a single type of storage such as File or Object, set the Scaling Factor to 100%. If a given Ceph Cluster is expected to be used for 30% Object storage and 70% File storage, allocate those Storage Pools with Scaling Factors of 30% and 70% respectively. The Scaling Factor for any given pool should be your best estimate; the PG count can be adjusted later, if required, to improve the distribution and balancing of data across the OSDs.
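To get a feel for how the Scaling Factor relates to PG counts, the sketch below applies the commonly cited Ceph rule of thumb of roughly 100 PGs per OSD, divided by the number of OSDs each PG spans and rounded up to a power of two. This is an assumption for illustration only; QuantaStor's exact PG calculation is not documented here and may differ, and the function name is hypothetical.

```python
def suggested_pg_count(osds: int, pool_width: int, scaling_factor: float,
                       target_pgs_per_osd: int = 100) -> int:
    """Rule-of-thumb PG count for one pool, rounded up to a power of two.

    pool_width: OSDs spanned by each PG, i.e. the replica count (e.g. 3)
                or K+M for erasure coding (e.g. 8+2 = 10).
    scaling_factor: the pool's expected share of cluster data (0.0 to 1.0).
    """
    raw = osds * target_pgs_per_osd * scaling_factor / pool_width
    pgs = 1
    while pgs < raw:
        pgs *= 2
    return pgs


print(suggested_pg_count(9, 3, 1.0))  # 512
print(suggested_pg_count(9, 3, 0.7))  # 256
print(suggested_pg_count(9, 3, 0.3))  # 128
```

With 9 OSDs and replica-3 pools, a 70%/30% split yields 256 and 128 PGs, so the larger pool receives proportionally more PGs.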

Ceph CRUSH Maps and Resource Domains

Ceph supports the ability to organize placement groups, which provide data mirroring across OSDs, so that high-availability and fault-tolerance can be maintained even in the event of a rack or site outage. By defining failure-domains, such as a Rack of systems, a Site, or Building, a map can be created so that Placement Groups are intelligently laid out to ensure high-availability despite the outage of one or more failure-domains, depending on the level of redundancy.

This map is called the Ceph CRUSH map (Controlled Replication Under Scalable Hashing); the underlying algorithm is described in the paper "CRUSH: Controlled, Scalable, Decentralized Placement of Replicated Data". The map defines how data is replicated across the Ceph cluster to ensure optimal performance and availability.

Creating CRUSH maps manually can be a complex process, so QuantaStor creates and configures CRUSH maps automatically, saving a large degree of administrative overhead. To facilitate automatic CRUSH map management, details about where each QuantaStor system is deployed must be provided. This is done by creating a tree of Resource Domains via the WebUI (or via CLI/REST APIs) to organize the systems in a given QuantaStor Grid into Racks, Sites, and Buildings. QuantaStor uses this information to automatically generate an optimal CRUSH map when pools are provisioned, ensuring optimal performance and high-availability.

Custom CRUSH map changes can still be made to adjust the map after the pool(s) are created and OSNEXUS provides consulting services to meet special requirements. Resource Domains are a QuantaStor construct so you will not find mention of them in general Ceph documentation, but they map closely to the CRUSH bucket hierarchy.
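The idea of laying out replicas across failure domains can be illustrated with a toy placement function. This is not the CRUSH algorithm; it is a simplified sketch using highest-random-weight (rendezvous) hashing that deterministically picks one OSD in each of N distinct racks for a given PG. The topology, system names, and functions are hypothetical.

```python
import hashlib

# Hypothetical Resource Domain layout: rack -> system -> OSD ids.
TOPOLOGY = {
    "rack-a": {"qs-01": [0, 1, 2], "qs-02": [3, 4, 5]},
    "rack-b": {"qs-03": [6, 7, 8], "qs-04": [9, 10, 11]},
    "rack-c": {"qs-05": [12, 13, 14], "qs-06": [15, 16, 17]},
}


def _score(pg_id: int, item: str) -> int:
    """Deterministic pseudo-random score for a (PG, item) pair."""
    digest = hashlib.sha256(f"{pg_id}:{item}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def place_pg(pg_id: int, replicas: int, topology: dict = TOPOLOGY) -> list[int]:
    """Choose one OSD in each of `replicas` distinct racks for a placement group."""
    if replicas > len(topology):
        raise ValueError("not enough racks to keep every replica in its own rack")
    # The highest-scoring racks win, so no two replicas share a rack.
    racks = sorted(topology, key=lambda r: _score(pg_id, r), reverse=True)[:replicas]
    chosen = []
    for rack in racks:
        systems = topology[rack]
        system = max(systems, key=lambda s: _score(pg_id, s))
        chosen.append(max(systems[system], key=lambda o: _score(pg_id, f"osd.{o}")))
    return chosen


for pg in range(4):
    print(pg, place_pg(pg, replicas=3))
```

Because the choice depends only on the PG id and the topology, every client computes the same placement without a central lookup table, which is the same property CRUSH relies on.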

For additional information, see CRUSH Maps.

Setting up Scale-out SAN with Ceph

This section steps through the process of setting up and deploying Scale-out SAN using Ceph on QuantaStor. Before getting started, we highly recommend that you familiarize yourself with the concepts in the sections above.

Requirements Before Getting Started

- 3x QuantaStor servers minimum

- Dual Intel or AMD CPUs

- 96 GB RAM

- 2x SSDs in hardware RAID1 (boot/system)

- 4x or more SSDs/HDDs for OSD data devices per server

- 2x SSDs per server for Journal Groups (only required for HDD based OSD systems)

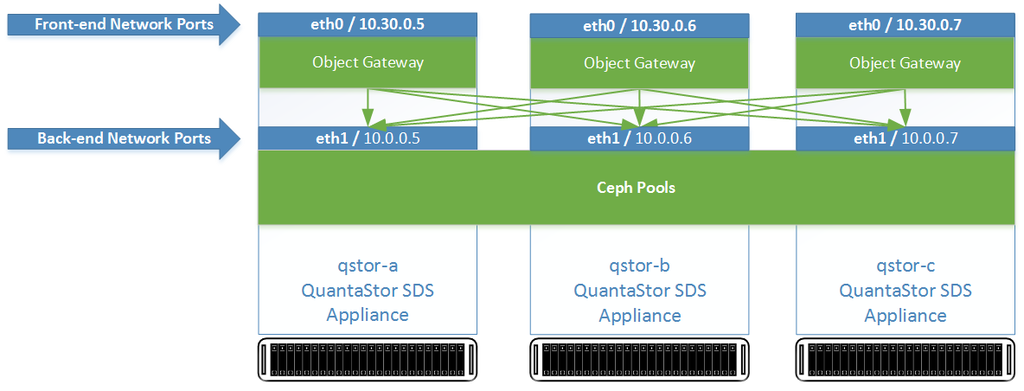

Front-end / Back-end Network Configuration

Network configuration for Ceph Clusters may use separate front-end and back-end networks. Depending on the protocols to be used it can sometimes be easier and simpler to configure a given Ceph Cluster with a single subnet for both the front-end/public network and the back-end/cluster network communication. With 100GbE and faster networks it is more common to deploy a single network configured for all cluster traffic.

System Installation and Initialization

The minimum node count for a Scale-out SAN configuration using Ceph is 3 Systems, with a maximum node count of 64. Install QuantaStor on each System and follow the steps below to complete the initial setup. For details, please see the QuantaStor Installation Guide, which includes detailed steps and troubleshooting.

- Login to the QuantaStor web management interface on each system

- Add your license keys, one unique key for each system

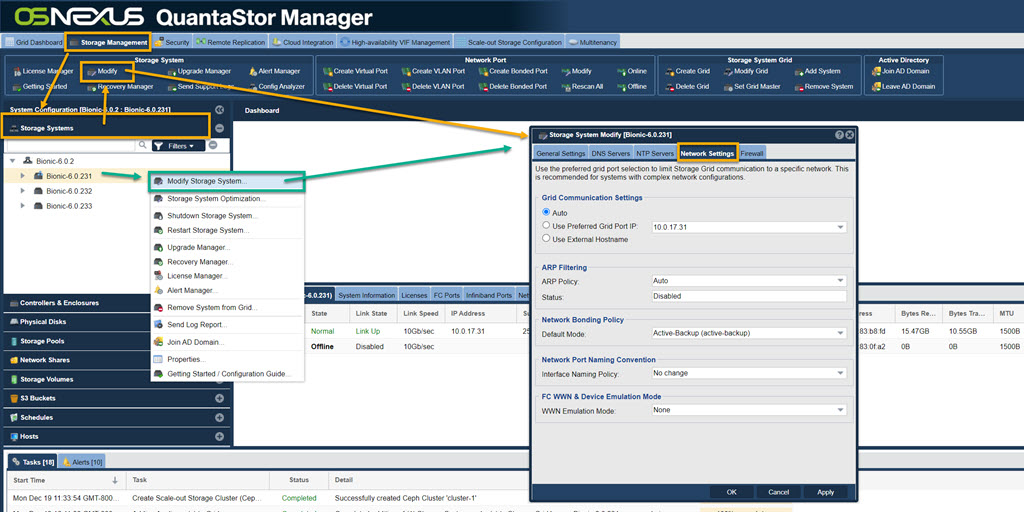

- Right-click on the storage system, choose 'Modify Storage System...', and set the DNS IP address (e.g., 8.8.8.8) and the NTP server IP address (important!)

Configure Front-end and Back-end Network Ports

Update the configuration of each network port using the Modify Network Port dialog to put it on either the front-end network or the back-end network. The port names should be consistently configured across all appliance nodes such that all ethN ports are assigned IPs on the front-end or the back-end network but not a mix of both.

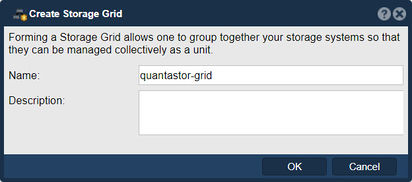

Create QuantaStor Grid

A QuantaStor Grid enables the administration and management of multiple Systems as a unit (single pane of glass). By joining Systems together in a Grid, the WebUI will display and allow access to resources and functionality on all Systems that are members of the Grid. Grid membership is also a prerequisite for the High-Availability and Scale-out configurations offered by QuantaStor.

Networking between the nodes must be configured before proceeding with Grid setup. Once networking is configured and confirmed on each System, proceed to create the QuantaStor Grid using the following instructions.

Create the Grid on the First Node

The node where the Grid is created initially will be elected as the initial primary/master node for the grid. The primary node has an additional role in that it acts as a conduit for intercommunication of grid state updates across all nodes in the grid. This additional role has minimal CPU and memory impact.

- Select the Storage Management tab and click the Create Grid button under the Storage System Grid section, or right-click on the System under Storage System and select Create Management Grid...

- Name: The Grid name can be set to anything.

After pressing OK, QuantaStor will reconfigure the node to create a single-node Grid.

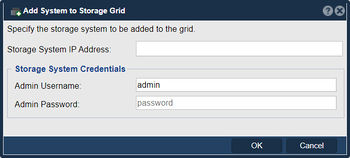

Add Remaining Nodes to the Grid

Now that the Grid is created and the Primary node is a member, proceed to add all the additional systems. Note that this should be done from the Primary node's WebUI.

- Click on the Add System button in the Storage Management ribbon bar or right click on the Grid and select Add System to Grid...

- IP or hostname for the node to add

- Username for an Administrative user (default is admin)

- Password to authenticate

Repeat this process for each node to be added to the QuantaStor Grid. The Grid and member Systems can be managed by connecting to the WebUI of any of the members. It is not necessary to connect to the master node.

Preferred Grid IP

System-to-system communication typically works itself out automatically, but we recommend specifying the network to be used for inter-node communication for management operations. To do this, right-click on each system in the grid, select 'Modify Storage System...', and select "Use Preferred Grid Port IP" in the "Network Settings" tab.

Note regarding User Access Security

Be aware that the management user accounts across the systems will be merged as part of joining the Grid. This includes the admin user account. Where duplicate user accounts exist, the accounts on the currently elected primary/master node take precedence.

Create Storage Targets / Hardware RAID Configuration

As noted in the Getting Started section, creating RAID5 units of 5 disks each for use as your XFS Storage Pool devices is highly recommended for performance and redundancy reasons. Ultimately any form of block storage can be used to create the XFS Storage Pools necessary for use with Ceph, but any other configuration should be reserved for test environments only.

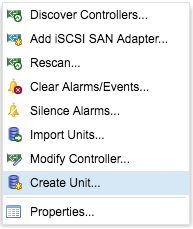

- Expand the Hardware Enclosures & Controllers drawer under the Storage Management window. Right click on the Controller and select Create Unit.

Proceed to create hardware RAID5 units using 5 disks per RAID unit (4 data disks + 1 parity). Repeat this on each node of the Grid until all devices have been assigned to RAID units and presented as LUNs. Click the Scan button in the Physical Disks section of the ribbon bar to discover the new devices.
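The RAID5 layout above (4 data disks + 1 parity per unit) can be planned with a short helper. This sketch assumes equally sized disks and ignores formatting overhead; the function name and the 24x 8TB example are hypothetical.

```python
def plan_raid5_units(disk_count: int, disk_tb: float, disks_per_unit: int = 5):
    """Group a node's disks into RAID5 units of (N-1) data disks + 1 parity disk.

    Returns (units, leftover disks, usable TB before Ceph replication).
    """
    units = disk_count // disks_per_unit
    leftover = disk_count - units * disks_per_unit
    usable_tb = units * (disks_per_unit - 1) * disk_tb
    return units, leftover, usable_tb


print(plan_raid5_units(24, 8.0))  # (4, 4, 128.0)
```

A node with 24 disks yields 4 units and 4 unassigned disks, which is a hint to size nodes in multiples of 5 disks when following this layout.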

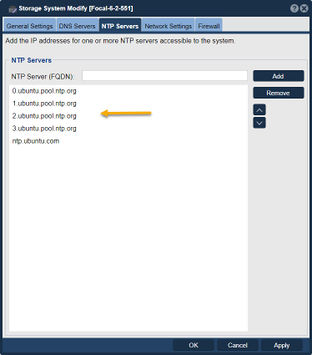

Network Time Protocol (NTP) Configuration

NTP keeps the clocks on networked computers accurate, and accurate clocks are particularly important in Ceph cluster deployments. When the clocks on two or more systems are not synchronized, the condition is called clock skew. Clock skew can happen for a few different reasons, most commonly:

- NTP server setting is not configured on one or more systems (use the Modify Storage System dialog to configure)

- NTP server is not accessible due to firewall configuration issues

- No secondary NTP server is specified, so an outage of the primary NTP server leads to clock skew

- NTP servers are offline

- Network configuration problem is blocking access to the NTP servers

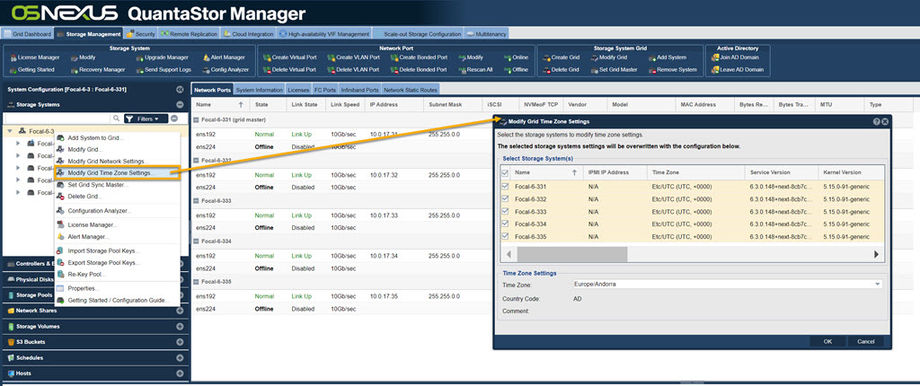

Ensure NTP servers are configured for each System by right-clicking on the System under the Storage System drawer, selecting Modify Storage System..., and verifying that valid NTP servers are configured.

- Note that QuantaStor retains the Ubuntu default NTP servers, but this may need to be adjusted based on accessibility restrictions of the Ceph cluster's network.

Alternatively, you can set all systems on a grid to the same time zone. Right click on the grid and select Modify Grid Time Zone Settings. This will bring up a menu with all of the systems pre-selected, and you can change the time zone. You can also set them to all have the same Domain Suffix. This is also where you can set the NTP Server for the entire grid.
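A simple way to reason about clock skew is to compare each system's clock offset against a tolerance. The sketch below assumes offsets (in seconds, relative to a reference system) have already been collected, for example from an NTP query, and uses 0.05 seconds, the default value of Ceph's `mon_clock_drift_allowed` setting, as the threshold. The function and system names are hypothetical.

```python
MON_CLOCK_DRIFT_ALLOWED = 0.05  # seconds; Ceph's default mon_clock_drift_allowed


def skewed_systems(offsets: dict[str, float],
                   limit: float = MON_CLOCK_DRIFT_ALLOWED) -> list[str]:
    """Return systems whose clock differs from the reference by more than `limit`.

    offsets maps system name -> clock offset in seconds relative to one
    reference system.
    """
    return sorted(name for name, off in offsets.items() if abs(off) > limit)


print(skewed_systems({"qs-01": 0.0, "qs-02": 0.012, "qs-03": -0.31}))  # ['qs-03']
```

Only the absolute offset matters: a clock that is behind is just as problematic for the monitors as one that is ahead.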

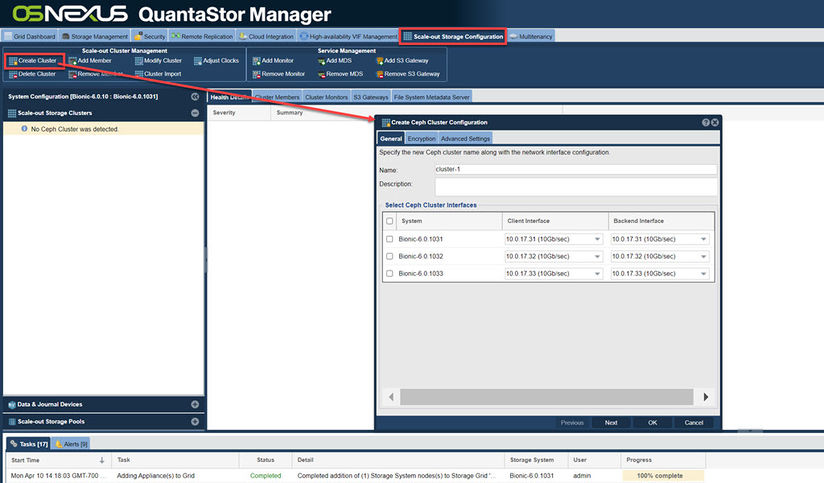

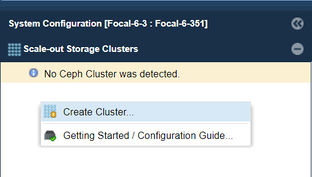

Create the Ceph Cluster

Navigation: Scale-out Storage Configuration --> Scale-out Storage Clusters --> Scale-out Cluster Management --> Create Cluster (toolbar)

The QuantaStor Grid and a Ceph Cluster are separate constructs. The Grid must be set up first and can consist of a heterogeneous mix of up to 64 systems, which can span sites. Within the grid, one can create up to 20x Ceph Clusters, where each cluster must have at least 3x systems. A given system cannot be a member of more than one grid or cluster at the same time. Typically a grid consists of just one or two Ceph clusters, and most often a cluster is built from systems that are all within one site, but a cluster can span multiple sites as long as the network connectivity is stable and the latency is relatively low. For high-latency links, the preferred method is to set up an asynchronous remote-replication link that transfers data (delta changes) from a primary to a secondary site on a schedule.

- Navigate to Scale-out Block & Object Storage in the top menu, then click on Create Cluster in the ribbon bar

- Alternatively you can right-click in the empty space below the Ceph Cluster dialog and select Create Ceph Cluster

Make sure that all of the network ports selected for the front-end network are the ports that will be used for client access (S3, iSCSI/RBD) and that the back-end ports are on a private network.
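The grid and cluster limits described above can be captured as a validation check before building a layout. This is a hypothetical helper, not a QuantaStor API; it encodes only the rules stated in this section (up to 64 systems per grid, up to 20 Ceph Clusters per grid, at least 3 systems per cluster, and no system in more than one cluster).

```python
MAX_GRID_SYSTEMS = 64
MAX_CLUSTERS_PER_GRID = 20
MIN_SYSTEMS_PER_CLUSTER = 3


def validate_layout(grid: set[str], clusters: dict[str, set[str]]) -> list[str]:
    """Return a list of rule violations for a proposed grid/cluster layout."""
    errors = []
    if len(grid) > MAX_GRID_SYSTEMS:
        errors.append(f"grid has {len(grid)} systems; limit is {MAX_GRID_SYSTEMS}")
    if len(clusters) > MAX_CLUSTERS_PER_GRID:
        errors.append(f"{len(clusters)} clusters; limit is {MAX_CLUSTERS_PER_GRID}")
    seen = {}  # system -> cluster it was first assigned to
    for name, members in clusters.items():
        if len(members) < MIN_SYSTEMS_PER_CLUSTER:
            errors.append(f"{name}: needs at least {MIN_SYSTEMS_PER_CLUSTER} systems")
        for system in sorted(members):
            if system not in grid:
                errors.append(f"{name}: {system} is not a grid member")
            elif system in seen:
                errors.append(f"{system} is in both {seen[system]} and {name}")
            else:
                seen[system] = name
    return errors


grid = {"qs-01", "qs-02", "qs-03", "qs-04"}
print(validate_layout(grid, {"ceph-a": {"qs-01", "qs-02", "qs-03"}}))  # []
print(validate_layout(grid, {"ceph-a": {"qs-01", "qs-02"},
                             "ceph-b": {"qs-02", "qs-03", "qs-04"}}))
```

The second call reports both problems at once: ceph-a has too few systems, and qs-02 is assigned to two clusters.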

Note Before Creating OSDs and Ceph Journals

The Object Storage Daemons (OSDs) store data, while the Journals accelerate write performance as all writes flow to the journal and are complete from the client perspective once the journal write is complete. A quick review of OSD and Journal requirements in a QuantaStor/Ceph Scale-out Block and Object configuration:

- Each system should have 5x HDDs (or SSDs) for data devices to satisfy minimum Placement Group redundancy requirements

- Each system should have 1x or more journal devices (NVMe/Optane preferred)

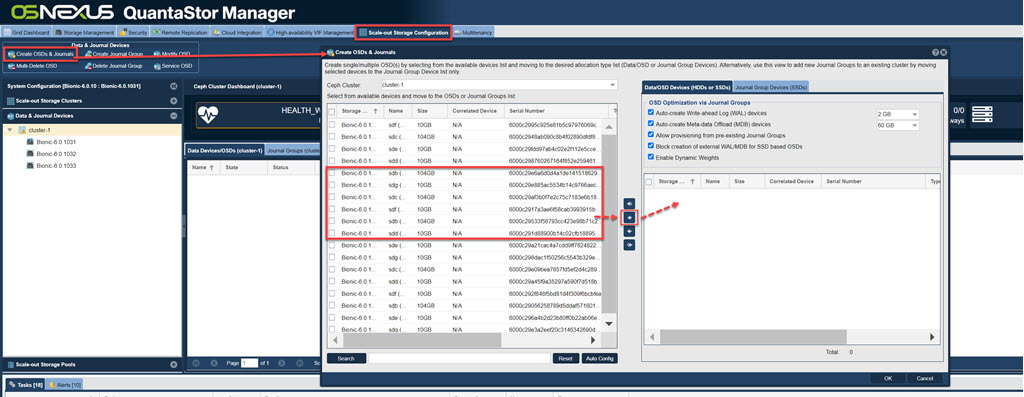

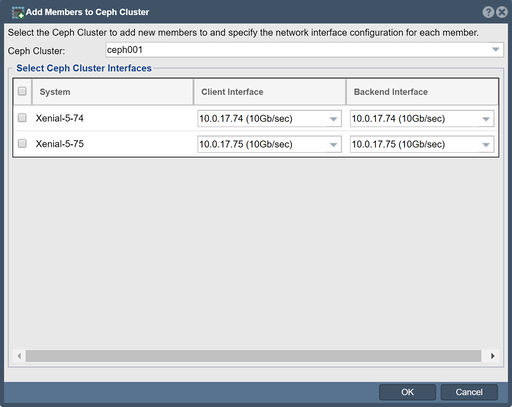

Create the OSDs and Journal Devices

Ceph OSDs and Journal Devices can be created by navigating to the Data & Journal Devices menu. From here you can either right click in the space and press Create OSDs & Journals or you can access it via the Create OSDs & Journals option in the ribbon bar. A dialogue box titled Create OSDs & Journals will open:

- Mark the devices intended for use as Journals or OSDs from each system

- Click the -> arrow in the middle section to set the devices as Journal or OSD devices

- If Journal devices already exist in the Ceph Cluster the Use available Ceph Journal partitions checkbox can be marked

- The Auto Config button can do all the work for you.

Once this form is submitted, QuantaStor begins the process by creating a Storage Pool for each of the devices added as an OSD in the dialogue. This process takes time. Allow a few minutes after the final Create Ceph OSD task completes for all OSDs to show up and fully initialize.

Please see the Manual Journal and OSD creation section in Management and Operation below for details on how to manually build the Journals, Storage Pools, and OSDs. Because manual creation requires many steps and is complex, we recommend the auto configuration tool, which simplifies the OSD creation process and can be used at any time.

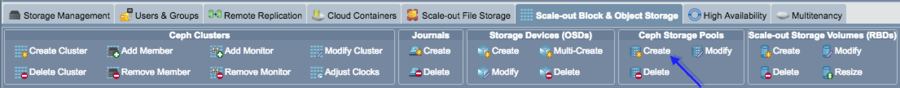

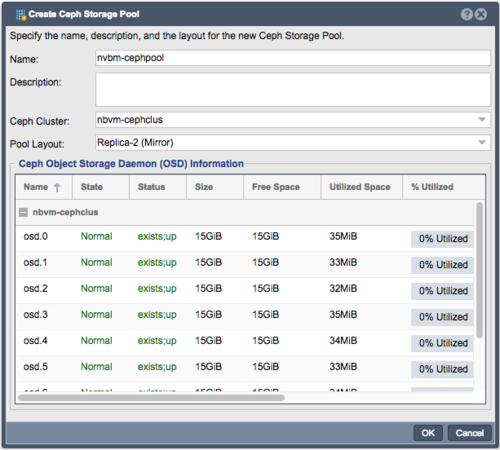

Create the Scale-out Storage Pools (Ceph Pools)

The Ceph Storage Pool represents the data container that RBD Scale-out Storage Volumes will be generated from. By default, the creation of a new Ceph Storage Pool will select all OSDs that are available in the Ceph Cluster. Once the Ceph Pool is created the Cluster health status will update to reflect the overall health of the cluster to include the health of the PGs associated with the new pool.

To create the Ceph Storage Pool, begin by clicking the Create button in the Ceph Storage Pools section of the ribbon bar.

The Create Ceph Storage Pool dialogue will pop-up:

- Name: A descriptive name for administrative purposes

- Ceph Cluster: Select the appropriate cluster if the Grid includes multiple Ceph Clusters

- Pool Layout: Use this to set the level of redundancy for the pool.

- Options:

- Replica-2 - Ceph will maintain two copies of the data across the cluster

- Replica-3 - Ceph will maintain three copies of the data across the cluster

Note that in QuantaStor 4.0 and below, all OSDs in the cluster will be included in the pool, but the QuantaStor 4.1 release will include the ability to select specific OSDs to be used for data and other OSDs to be used as a fast SSD tier to accelerate read and write performance.
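As a rough guide to what each Pool Layout means for capacity, usable space is approximately raw OSD capacity divided by the replica count. This sketch additionally assumes you want to stay below 85% full, which matches Ceph's default `mon_osd_nearfull_ratio`; the helper and the 9x 8TB example are hypothetical, and filesystem overhead is ignored.

```python
NEARFULL_RATIO = 0.85  # Ceph's default nearfull warning threshold


def usable_capacity_tb(raw_tb: float, replicas: int,
                       fill_limit: float = NEARFULL_RATIO) -> float:
    """Approximate client-usable capacity of a replicated pool."""
    # Every block is stored `replicas` times, and we stop short of the fill limit.
    return raw_tb / replicas * fill_limit


raw = 9 * 8.0  # hypothetical: 9 OSDs of 8TB each
for layout, copies in (("Replica-2", 2), ("Replica-3", 3)):
    print(layout, round(usable_capacity_tb(raw, copies), 1), "TB")
```

With 72TB of raw OSD capacity, Replica-2 leaves about 30.6TB and Replica-3 about 20.4TB below the nearfull threshold, the price of the extra copy.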

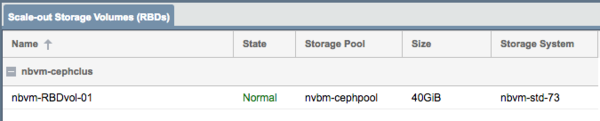

Provisioning Scale-out Storage Volumes (Ceph RBDs)

Provisioning iSCSI Storage Volumes from Ceph based Storage Pools is identical to the process used for ZFS based Storage Pools. Use the Create Storage Volume dialog from the main Storage Management tab and select the pool to provision from. Note that the iSCSI initiator (VMware, Linux, Windows, etc.) must be configured to log in to a QuantaStor network port (target port) IP address on each appliance associated with the Ceph Cluster that the RBD is a member of, so that there are multiple paths to the storage. If a connection is made to only a single appliance, there will be no automatic fail-over in the event of an outage.

To create Scale-out Storage Volumes (RBDs), begin by clicking the Create button in the Scale-out Storage Volumes (RBD) section of the ribbon bar:

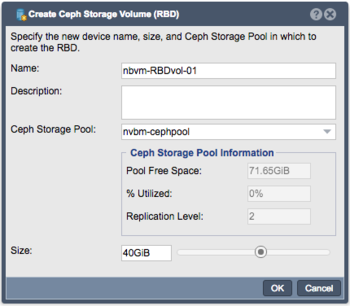

The Create Ceph Storage Volume dialogue will pop-up:

- Name: A descriptive name for administrative purposes

- Ceph Storage Pool: If multiple pools are available in the Grid, select the appropriate pool

- Ceph Storage Pool Information is displayed for informational purposes

- Size: Select the size of the Volume to be created. Note that all Ceph RBDs are thin-provisioned

Management and Operation

All key setup and configuration options are configurable via the WebUI. Operations can also be automated using the QuantaStor REST API and CLI. Custom Ceph configuration settings, such as custom CRUSH map settings, can also be made at the console/SSH for special configurations; for these scenarios, we recommend checking with OSNEXUS support or pre-sales engineering for advice before making any major changes.

Capacity Planning

One of the great features of QuantaStor's scale-out Ceph based storage is that it is easy to expand by adding more storage to existing systems or by adding more systems into the cluster.

Expanding by adding more Systems

Note that it is not required to use the same hardware and same drives when you expand but it is recommended that the hardware be a comparable configuration so that the re-balancing of data is done evenly. Expanding can be done one system at a time and the OSDs for the new system should be roughly the same size as the OSDs on the other systems.

Expanding by adding storage to existing Systems

If you add more OSDs to existing systems, be sure to expand multiple or all systems with the same number of new OSDs so that re-balancing can work efficiently. If your pools are set up with a replica count of 2x, then at minimum expand a pair of systems with additional OSDs at a time.

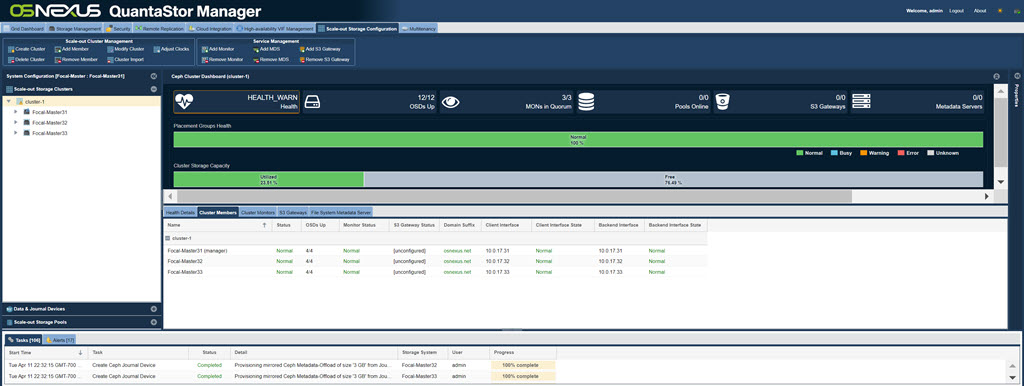

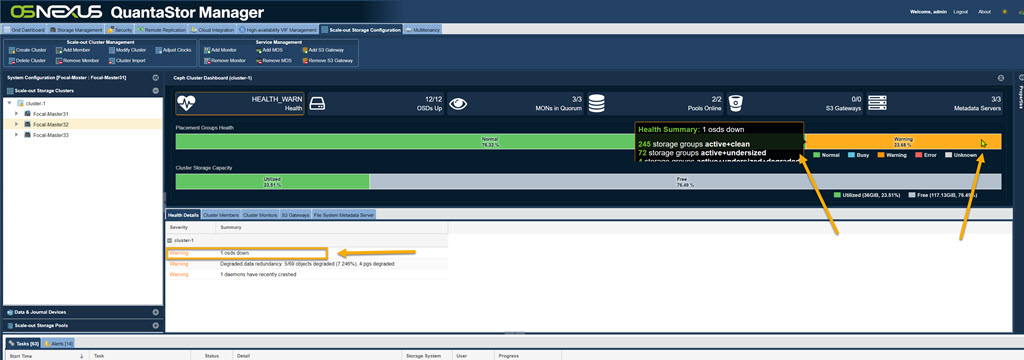

Understanding the Cluster Health Dashboard

The cluster health dashboard has two bars, one to show how much space is used and available, the second shows the overall health of the cluster. The health bar represents the combined health of all the "placement groups" for all pools in the cluster.

If a node goes offline or a system is impacted such that its OSDs become unavailable, the health bar will show some orange and/or red segments. Hover the mouse over the affected section to get more detail about the OSDs that are impacted.

Additional detail is also available if the OSD section has been selected. If you've setup the cluster with OSDs that are using hardware RAID then your cluster will have an extra level of resiliency as disk drive failures will be handled completely within the hardware RAID controller and will not impact the cluster state.

Adding a Node to a Ceph Cluster

Additional Systems can be added to the Ceph Cluster at any time. The same hardware requirements apply and the System will need to have appropriate networking (connections to both Client and Backend networks).

To add an additional System to the Ceph Cluster:

- First add the System to the QuantaStor Grid

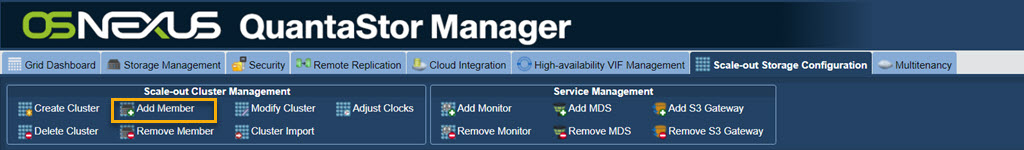

- Select Scale-out Storage Configuration --> Scale-out Storage Clusters --> Scale-out Cluster Management --> Add Member

The Add Member to Ceph Cluster dialogue box will pop-up:

- Ceph Cluster: If there are multiple Ceph Clusters in the grid, select the appropriate cluster for the new member to join

- Storage System: Select the System to attach to the Ceph Cluster

- Client & Backend Interface: QuantaStor will attempt to select these interfaces appropriately based on their IP addresses. Verify that the correct interfaces are assigned. If the interfaces do not appear, ensure that valid IP addresses have been assigned for the Client and Back-end Networks and that all physical cabling is connected correctly.

- Enable Object Store for this Ceph Cluster Member: Leave this unchecked if using as Scale-out SAN/Block Storage solution. Check this if using Object storage.

Adding OSDs to a Ceph Cluster

OSDs can be added to a Ceph Cluster at any time. Simply add more disk devices to existing nodes or add a new member to the Ceph Cluster that has unused storage. The new devices can be added as OSDs using the Create OSDs & Journals dialog as before, or by simply pressing the Auto Config button, which optimizes device selection.

Note that if you are not adding SSD devices to be used as journal devices for the new OSDs, you must check the option to Allow provisioning from pre-existing Journal Groups, which uses existing unused journal partitions for the newly created OSDs.

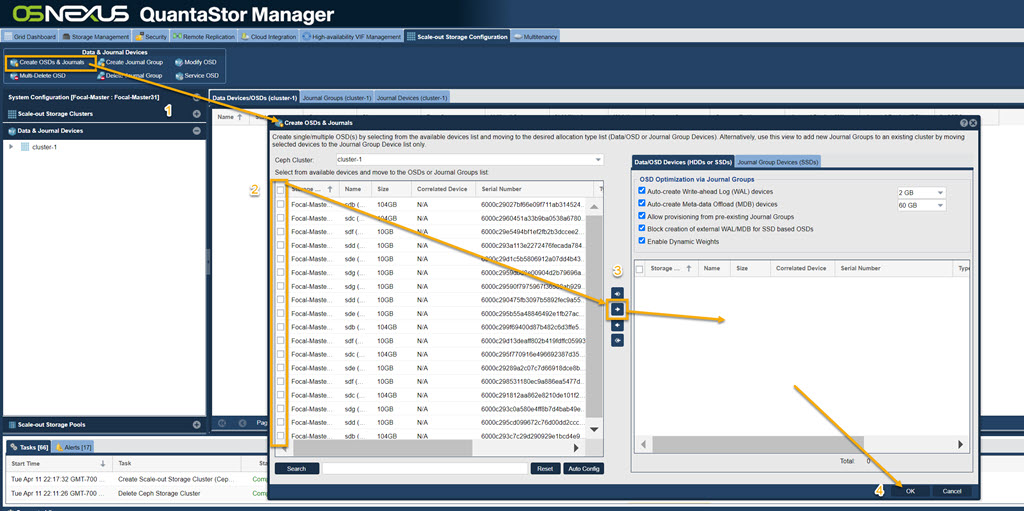

Removing OSDs from a Cluster

OSDs can be removed from the cluster at any time and the data stored on them will be rebuilt and re-balanced across the remaining OSDs. Key things to consider include:

- Make sure there is adequate space available on the remaining OSDs in the cluster. If you have 30x OSDs and you're removing 5x OSDs, the used capacity on each remaining OSD will increase by roughly 5/25, or 20%. If there isn't that much room available, expand the cluster first, then retire/remove the old OSDs.

- If there is a large amount of data in the OSDs it is best to re-weight the OSD gradually to 0 rather than abruptly removing it.

- In multi-site configurations especially, make sure that the removal of the OSD doesn't put pools into a state where there are not enough copies of the data to continue read/write access to the storage. Ideally OSDs should be removed after re-weighting and subsequent re-balancing has completed.

- Select the Data & Journal Devices menu and click on Multi-Delete OSD in the ribbon bar. From here you can check the OSDs that you wish to delete. Once all the desired OSDs are selected, press OK to begin the deletion process.

- Deletion will take time, depending on how much data needs to be migrated to other OSDs in the Cluster
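The capacity check and gradual re-weighting described above can be sketched as follows. The sketch assumes equally sized OSDs and even re-balancing; the helper names and the 5-step schedule are hypothetical choices. Each weight in the schedule would be applied in turn (for example with Ceph's `ceph osd crush reweight` command), waiting for re-balancing to complete before moving to the next step.

```python
NEARFULL_RATIO = 0.85  # Ceph's default nearfull warning threshold


def utilization_after_removal(total_osds: int, removed: int, current_util: float) -> float:
    """Average OSD utilization once `removed` equally sized OSDs are drained."""
    remaining = total_osds - removed
    if remaining <= 0:
        raise ValueError("cannot remove every OSD")
    # The same data is spread over fewer OSDs.
    return current_util * total_osds / remaining


def reweight_steps(start_weight: float, steps: int = 5) -> list[float]:
    """Evenly spaced CRUSH weights stepping an OSD from start_weight down to 0."""
    return [round(start_weight * (steps - i) / steps, 4) for i in range(1, steps + 1)]


after = utilization_after_removal(30, 5, 0.60)
print(f"utilization after removal: {after:.0%}")  # 72%
if after > NEARFULL_RATIO:
    print("expand the cluster before removing these OSDs")
print(reweight_steps(1.0))  # [0.8, 0.6, 0.4, 0.2, 0.0]
```

With 30 OSDs at 60% used, removing 5 raises average utilization to about 72%, the 20% relative increase described above, which is still below the nearfull threshold.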

Adding/Removing Monitors in a Cluster

For Ceph Clusters with up to 10 to 16 systems, the default 3x monitors is typically fine. The initial monitors are created automatically when the cluster is formed. Beyond the initial 3x monitors, it may be worthwhile to move to 5x monitors for additional fault-tolerance, depending on what the cluster failure domains look like. If your cluster spans racks, it is best to have a monitor in each rack rather than having all the monitors in the same rack, which would make the storage inaccessible in the event of a rack power outage. Monitors are added and removed using the toolbar buttons of the same names.
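The rack guidance above reduces to a simple rule: no single rack should hold so many monitors that losing it breaks the majority quorum. This sketch checks a proposed monitor placement under that assumption; the rack labels and function name are hypothetical.

```python
from collections import Counter


def rack_outage_safe(monitor_racks: list[str]) -> bool:
    """True if losing any one rack still leaves a majority of monitors online."""
    if not monitor_racks:
        return False
    total = len(monitor_racks)
    quorum = total // 2 + 1
    per_rack = Counter(monitor_racks)
    # Losing a rack removes every monitor in it; the rest must still form a majority.
    return all(total - count >= quorum for count in per_rack.values())


print(rack_outage_safe(["rack-a", "rack-a", "rack-a"]))  # False
print(rack_outage_safe(["rack-a", "rack-b", "rack-c"]))  # True
print(rack_outage_safe(["rack-a", "rack-a", "rack-b", "rack-c", "rack-d"]))  # True
```

The third case shows that with 5x monitors a rack may hold two of them, since the remaining three still form a quorum.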